What is Calibration? Complete Beginner Guide (With Practical Examples)

Before we define what calibration means, consider the scenarios below:

You go to the market, buy 1 kilo of meat, and pay for it with your hard-earned money, but when you check it at home it weighs only ¾ of a kilo. How would you feel?

You fill up with enough fuel for 2 days, as usual, but after only one day your fuel gauge reads near empty. Would this not make you mad?

You are baking a cake, and the instructions tell you to set the temperature to 50 degrees Celsius for 20 minutes. You follow the steps and specifications, but your cake turns into charcoal. Would you not be upset?

How would you feel if these scenarios happened to you? You might get angry or dismayed, or even complain about the products or services that you paid for, all because of un-calibrated instruments.

This is why calibration matters in daily life, not just inside a laboratory or in manufacturing. Quality, safety, and reliability all depend on it.

Now let us define what calibration is.

Basics of Calibration

NIST and ISO publish formal definitions of calibration that you can look up. To make it easier to understand, here is a simple definition:

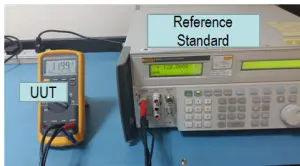

Calibration is simply the comparison of an instrument of unverified accuracy, variously called Measuring and Test Equipment (M&TE), Unit Under Test (UUT), Unit Under Calibration (UUC), Device Under Test (DUT), or simply Test Instrument (TI), to an instrument or standard of known (higher) accuracy in order to detect or eliminate unacceptable variations. It may or may not involve adjustment or repair.

It makes sure that what an instrument displays matches what it actually measures, by referencing it to, or adjusting it against, a Reference Standard.

Simply put, calibration helps to:

- Ensure that you get what you have paid for

- Satisfy your expectations

- Create win-win situations

In daily operations, Calibration Means:

- Accuracy Check: Calibration is like a health checkup for measuring tools. It’s the process of making sure that instruments like thermometers or scales are giving you accurate and reliable measurements.

- Reference Comparison: Imagine you have a ruler, but you’re not sure if it’s exactly 12 inches long. Calibration is like comparing it to a known, precise ruler to confirm its accuracy.

- Adjustment: Sometimes, instruments may drift and give slightly incorrect readings over time. Calibration involves fine-tuning or adjusting them to ensure they’re spot-on.

- Standardization: It’s like setting the rules for a game. Calibration involves using standardized methods and reference points to ensure that measurements are consistent and can be trusted.

- Trust Assurance: Calibration is about building trust in measurements. When something is calibrated, it means you can rely on it to give you trustworthy data, which is crucial in fields like science, engineering, and manufacturing.

What is a Reference Standard?

A reference standard is also an instrument, equipment, or measuring device, but one with higher metrological quality or accuracy than the Unit Under Calibration (UUC).

It is what we compare the UUC reading against and where the reference measurement values come from. It is also calibrated, but by a higher-level laboratory with traceability to a higher standard (see traceability below).

The reference standard is also known as the Master Standard. I sometimes also hear it called the Master Calibrator or simply the ‘calibrator’.

Why Calibrate – Reasons for Calibration

There are so many reasons why we need to perform a calibration. Some reasons are:

- For public or consumer protection, like the example above, to get the value of the money we spend on a product or service.

- For a technical reason: as components age or equipment undergoes environmental or mechanical stress, its performance gradually degrades. This degradation is what we call ‘drift’. When it happens, the results generated by the equipment become unreliable, and both design and quality suffer. We cannot eliminate drift, but through calibration it can be detected and contained.

- For practical reasons: calibration eliminates doubt and provides confidence when we encounter the following situations with our instruments:

- when an instrument is newly installed or purchased

- when an instrument is mishandled during transfer (for example, dropped)

- when instrument performance is questionable

- when the calibration period is overdue

- when an instrument has been kept in an unstable environment for too long (exposed to vibration or to temperatures that are too high or too low)

- when a new setting, repair, and/or adjustment has been performed

While detecting an inaccuracy is one of the main reasons for calibration, some other reasons are:

- Customer requirements – customers want to ensure that the product they buy is within the expected specifications.

- Government or statutory regulations – regulators want to make sure that products are safe and reliable for the public.

- Audit requirements – calibration is required for achieving ISO 9001:2015 certification and ISO 17025 accreditation.

- Quality and safety requirements – reliable and accurate operation through proper use of inspection instruments gives everyone a great deal of confidence.

- Process requirements – to ensure that the product is accurate and reliable, some operations will not be executed unless the equipment has passed calibration and verification. Calibrated equipment is also used in equipment or product qualification as part of quality control.

Importance of Instrument Calibration

- To establish and demonstrate traceability (explained below). Through calibration, a measurement made by the instrument means the same thing wherever you are: 1 kg of weight in one place is also 1 kg anywhere else. This holds whatever unit or parameter the instrument measures.

- To determine and ensure the accuracy of instrument readings (through calibration, you can determine how close the measured value is to the true or reference value), resulting in product quality and safety.

- To ensure that readings from the instrument are consistent with other measurements. This means that you get the same measurement results whichever compatible measuring instrument you use in the process.

- To establish the reliability of the instrument, making sure that it functions the way it is intended to, which gives more confidence in the expected output.

- To provide customer satisfaction by delivering a high-quality product that matches what customers have paid for.

What needs calibration?

- All inspection, measuring, and test equipment that can affect or determine product quality. If you use an instrument to decide whether a product passes or fails based on a measured value, that instrument should be calibrated.

- Equipment that, if out of calibration, would produce unsafe products.

- Equipment that requires calibration because of an agreement. For example, before entering into a contract, a customer may need assurance that the equipment that will produce their product is calibrated.

- All measuring and testing equipment (standards) affecting the accuracy or validity of calibrations. These are the master standards, go/no-go jigs, check masters, reference materials, and related instruments that we use to verify the accuracy of other instruments or measuring equipment.

(Figure: different kinds of instruments for calibration)

What does a calibrated Instrument Look like?

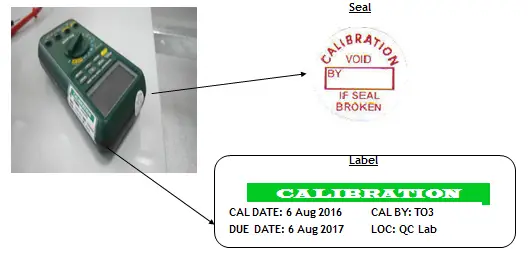

When you have your measuring instruments calibrated, see to it that each has a calibration label showing its calibration date and due date. Depending on the calibration lab, the label may also include a serial number, a certificate number, and the person in charge of the calibration. If needed, a ‘void’ seal is also placed to protect the instrument from unauthorized adjustment.

A calibrated instrument with labels is useless if the calibration certificate is not available, so be sure to keep the certificate safe and readily available when requested.

Check calibration certificates carefully once you receive them. Not all calibrated instruments perform as you expect; some have limited use based on the results of the calibration. You must learn how to read and interpret the results in a calibration certificate.

When is Calibration Not Required?

Every measuring instrument needs calibration but not all measuring instruments are required to be calibrated. Below are some of the reasons or criteria to consider before having an instrument calibrated. This may save you some time and money.

- It is not critical in your process (it only displays a reading for a functionality check).

- It functions as an indicator only (for example, high or low, open or closed).

- It is only an accessory supporting the main instrument. For example, a coil of wire used to amplify current: the current is measured, but the amplification itself is not critical.

- Its accuracy is established by reference to a more accurate instrument within a group (for example, a set of pressure gauges connected in series, one of which is a more accurate gauge that the others are compared against).

- The instrument is verified regularly or continuously monitored by a calibrated instrument, as documented in a measurement assurance process. For example, a room thermometer that is regularly verified by a calibrated thermometer.

- The instrument is part of, or integrated into, a system that is calibrated as a whole. For example, a thermocouple that is permanently connected to an oven (some thermocouples are detached after use and transferred to other units).

Please visit this link for more details regarding this topic.

How To Perform an Adjustment of Calibration Interval

Every measuring instrument needs calibration, and every calibrated instrument needs to be recalibrated. This means that there is a due date for a recalibration period that we need to establish.

This recalibration period is a scheduled calibration that is based on the calibration interval that we set. Before we send our instruments for calibration, we need to set in advance our initial interval or our final fixed interval. This will be communicated with the calibration lab.

These initial calibration intervals may be based on the following aspects:

- Manufacturer requirements – recommended by the manufacturer

- Frequency of use – the more it is used, the shorter the calibration interval

- Requirements of regulatory bodies (for example, required by the government)

- Experience of the user with the same type of instrument

- Criticality of use – more critical instruments need higher accuracy or have very strict tolerances, and therefore a shorter calibration interval

- Customer Requirements

- Conditions of the environment where it is being used.

- Published Documents

Now that we have an initial calibration interval, we need to set the fixed calibration interval based on the performance of our instruments. We review that performance by gathering all the calibration records and plotting them in a graph to study the stability of the instrument or the drift it has experienced.

Based on this performance history, we can decide if we need to extend or reduce the calibration interval.

I have shown how to do this in this link >> CALIBRATION INTERVAL: HOW TO INCREASE THE CALIBRATION FREQUENCY OF INSTRUMENTS

Basic Calibration Terms and Principles

What is the Accuracy of an Instrument?

Accuracy is a reference number (usually given in percent (%) error) that shows the degree of closeness to the true value. There is a true value, which means that you have a source of known value to compare with.

The closer your measuring instrument reads or measures to the true value, the more accurate your instrument is. Or, in other words, “the smaller the error, the more accurate the instrument”.

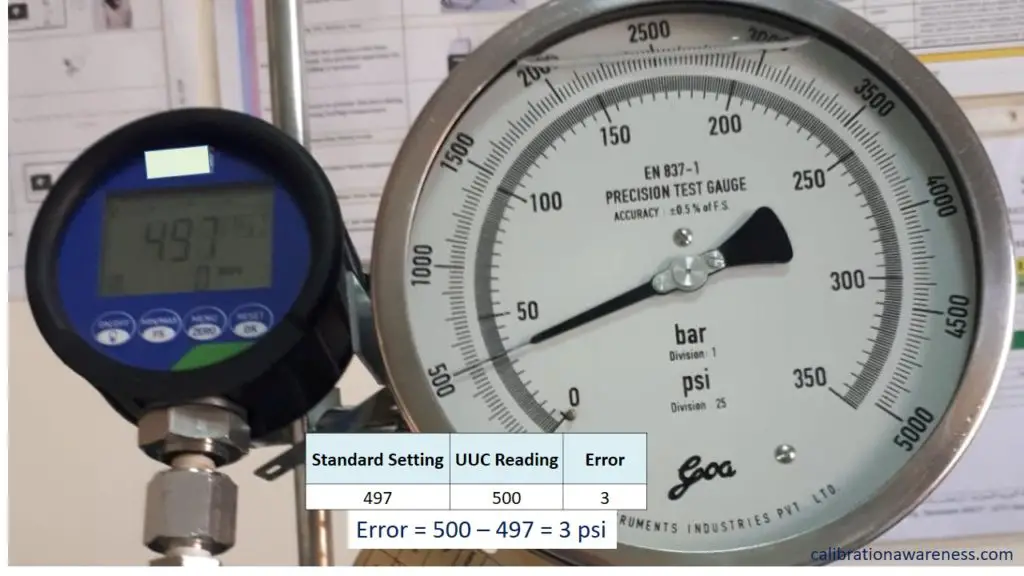

Error or Measurement Error – as per JCGM 200:2012, refers to the measured quantity value minus a reference quantity value. In simple terms, this is the UUC displayed reading minus the STD reading.

How to calculate the accuracy of measurement?

To find the value of accuracy, you need to calculate the error, and to gauge the “degree of closeness to the true value”, you need to calculate the % error.

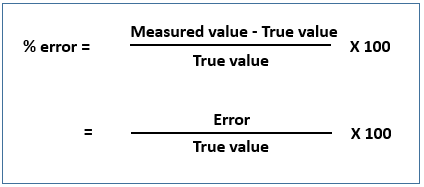

To calculate the error and % error, below are the formulas:

Error = Measured value – True value

And for the percent error:

% error = (Error / True value) x 100

We are performing a calibration to check for the accuracy of instruments, and to determine how close (or far) the reading of our instruments is compared to the reading of the reference standard.
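As a minimal sketch of the two formulas above, the Python snippet below computes the error and the % error for a single reading. The function names and the 10.2 psi and 10.0 psi readings are hypothetical, chosen only for illustration.

```python
def measurement_error(measured, reference):
    """Error = measured value - true (reference) value."""
    return measured - reference


def percent_error(measured, reference):
    """% error = (error / true value) x 100."""
    if reference == 0:
        raise ValueError("reference value must be non-zero to compute % error")
    return measurement_error(measured, reference) / reference * 100


# Hypothetical readings: the UUC displays 10.2 psi while the standard reads 10.0 psi.
uuc_reading = 10.2
std_reading = 10.0

error = measurement_error(uuc_reading, std_reading)
pct = percent_error(uuc_reading, std_reading)
print(f"error = {error:.2f} psi, % error = {pct:.1f} %")
```

A positive error means the UUC reads higher than the standard; a negative error means it reads lower.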

Where can we find the Accuracy of Instruments?

- Original Equipment Manufacturer (OEM) Specifications in manual or brochure

- Published standards or handbooks

- Calibration Certificates

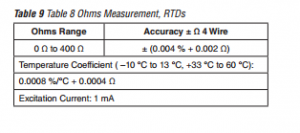

When calibrating, make sure that the reference standard has higher accuracy than the Unit Under Calibration (UUC). A good rule of thumb is an accuracy ratio of 4:1.

This means that if your UUC has an accuracy of 1, the reference standard should have an accuracy of 0.25 or better, four times more accurate than the UUC (1/0.25 = 4). To make this clearer: since accuracy is usually expressed as a percentage (% error), the closer the value is to zero, the more accurate the instrument.
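The 4:1 rule of thumb can be sketched as a small check. The function names and the `min_ratio` parameter are assumptions made for this illustration; the accuracy values are the ones used in the paragraph above, and both must be expressed in the same unit.

```python
def accuracy_ratio(uuc_accuracy, standard_accuracy):
    """Ratio of UUC accuracy to reference standard accuracy (same unit for both)."""
    if standard_accuracy <= 0:
        raise ValueError("standard accuracy must be a positive number")
    return uuc_accuracy / standard_accuracy


def standard_is_suitable(uuc_accuracy, standard_accuracy, min_ratio=4.0):
    """True when the standard meets the assumed minimum accuracy ratio (4:1 by default)."""
    return accuracy_ratio(uuc_accuracy, standard_accuracy) >= min_ratio


ratio = accuracy_ratio(1.0, 0.25)
print(f"accuracy ratio = {ratio:.0f}:1, suitable = {standard_is_suitable(1.0, 0.25)}")
```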

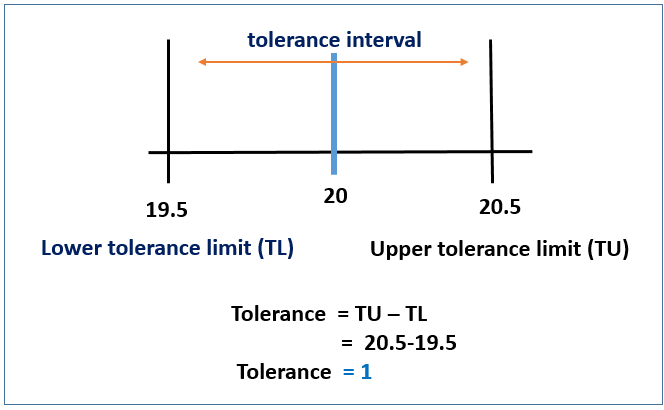

What is the Tolerance of Instruments?

Tolerance is closely related to accuracy, but for clarity, it is the permissible deviation or the maximum error to be expected from a manufactured component. It is usually expressed in measurement units (for example psi, volts, or meters).

As per JCGM 106:2012:

Tolerance = difference of upper and lower tolerance limits

Tolerance limit = specified upper or lower bound of permissible values of a property

Tolerance interval = interval of permissible values

For example:

A pressure gauge with a full-scale reading of 20 psi and a tolerance limit of +/- 0.5 psi (20 +/- 0.5 psi) has a tolerance of 1 psi (see the photo below).

After calibration, we should perform a verification to determine whether the reading is within the specified tolerance. The instrument may be less accurate than before, but if its reading is within the specified tolerance limits, it is still acceptable. This is where a pass-or-fail decision is made.

A pass-or-fail decision during verification is best applied by using a “Decision Rule” for a more in-depth assessment of conformity.
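The pressure gauge example can be sketched in Python as follows. This is only a simple comparison of a reading against the tolerance limits; it does not include measurement uncertainty, which a fuller decision rule would take into account. The function names and the 20.3 psi and 20.7 psi readings are hypothetical.

```python
def tolerance_limits(nominal, plus_minus):
    """Return the (lower, upper) tolerance limits for nominal +/- plus_minus."""
    return nominal - plus_minus, nominal + plus_minus


def tolerance(lower, upper):
    """Tolerance = difference between the upper and lower tolerance limits."""
    return upper - lower


def within_tolerance(reading, lower, upper):
    """Simple pass/fail check: True when the reading lies inside the limits."""
    return lower <= reading <= upper


lower, upper = tolerance_limits(20.0, 0.5)   # limits for 20 +/- 0.5 psi
print(f"limits = {lower} to {upper} psi, tolerance = {tolerance(lower, upper)} psi")

for reading in (20.3, 20.7):                 # hypothetical UUC readings
    verdict = "pass" if within_tolerance(reading, lower, upper) else "fail"
    print(f"{reading} psi -> {verdict}")
```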

The tolerance needed should be determined by the user of the UUC, based on a combination of factors, including:

- Process requirements

- The capability of measurement equipment

- Manufacturer’s tolerance specifications (Related to accuracy)

- Published Standards

See more explanation and presentation in this link >> Differences Between Accuracy, Error, Tolerance, and Uncertainty in Calibration Results

What is Precision in Measurement?

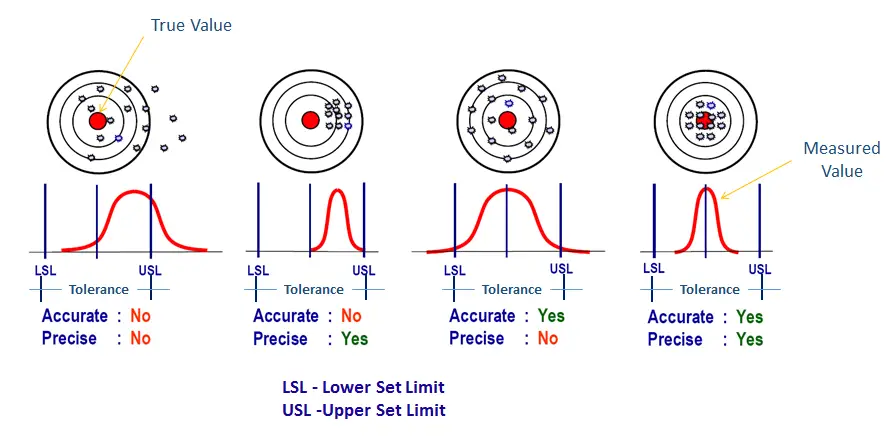

Precision is the closeness of repeated measurements to each other. Precision indicates good stability and repeatability of an instrument, but not accuracy.

A measuring instrument can be highly precise and still not be accurate. Our goal when calibrating a measuring instrument is to have both good accuracy and good precision. Precision can be determined without using a reference standard.

How to Determine Precision in Measurements?

Precision is closely related to repeatability: you cannot determine precision if you do not have repeated measurements.

In order to calculate and determine ‘precision’, follow the below steps:

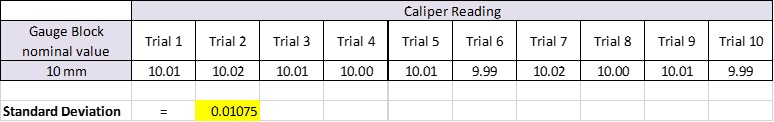

1. With the same method of measurement, get a repeated reading. For example, a digital caliper measuring a 10 mm gauge block (or any stable material) for 10 times. See below table.

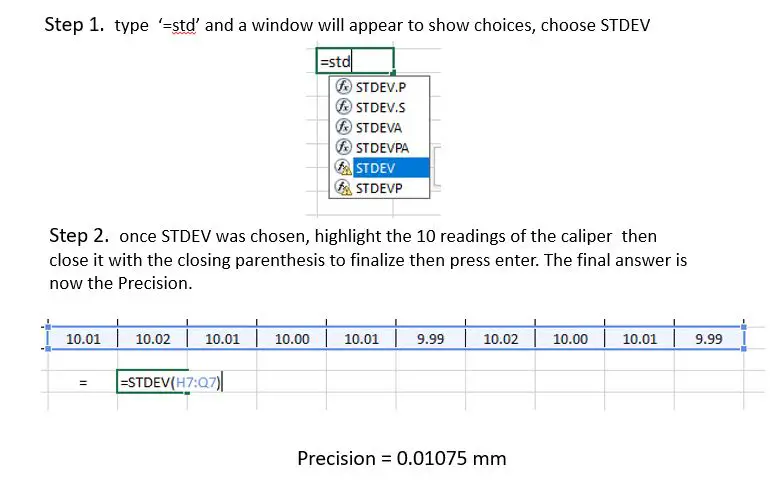

2. using the Excel worksheet (this will simplify and make the calculation easy) plot all the measured points and calculate the standard deviation. Follow the below instructions to calculate in Excel.

The smaller the value, the more precise the instrument.
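For readers without Excel, the same calculation can be sketched in Python. The ten readings below are hypothetical values for a 10 mm gauge block, not data from this article. `statistics.stdev` computes the sample standard deviation, which is the same quantity that Excel's `=STDEV()` returns.

```python
import statistics


def precision(readings):
    """Sample standard deviation of repeated readings (smaller = more precise)."""
    if len(readings) < 2:
        raise ValueError("at least two repeated readings are needed")
    return statistics.stdev(readings)


# Hypothetical repeated readings of a 10 mm gauge block, in mm.
readings = [10.01, 10.00, 10.02, 9.99, 10.01, 10.00, 10.01, 10.02, 10.00, 10.01]

print(f"mean = {statistics.mean(readings):.3f} mm")
print(f"standard deviation (precision) = {precision(readings):.4f} mm")
```

Note that no reference standard appears anywhere in this calculation, only the spread of the readings themselves.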

Below are the relationships of Accuracy, Precision, and Tolerance to better understand:

What is Stability and How to Determine Stability?

Stability is the ability of the instrument to maintain its output within defined limits over a period of time. You can determine how stable an instrument is by collecting its output data at a fixed interval.

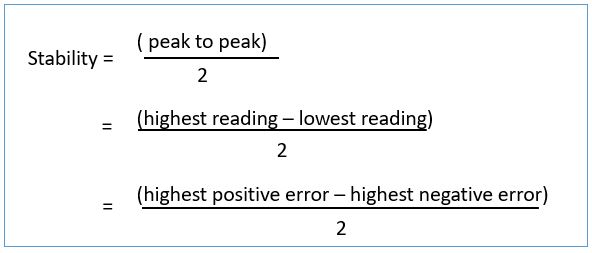

There are many ways to do it, but I will share the most basic and simple one. Just remember that the goal of a stability calculation is to determine that the instrument or standard is functioning within specifications over a defined period.

Use this formula:

Stability = (Highest positive error - Highest negative error) / 2

For example:

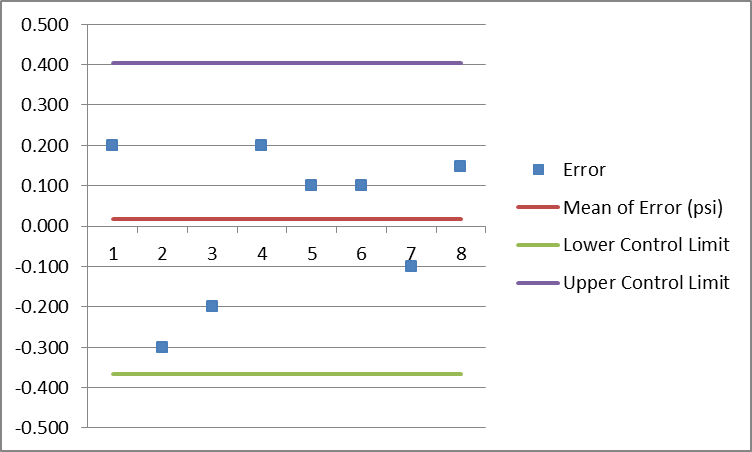

To determine stability, refer to the control chart below (or the error part on the table). Observe the peak-to-peak value (the highest and the lowest value in the control chart below) then perform the below calculation.

Highest positive error = 0.2

Highest negative error = -0.3

Stability = [0.2 -(-0.3)]/2 = 0.25

Therefore, stability = 0.25 psi
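The calculation above is half of the peak-to-peak error, which can be sketched in Python as follows. Only the two extreme errors (0.2 and -0.3) come from the example; the other values in the list and the function name are hypothetical.

```python
def stability_half_range(errors):
    """Half of the peak-to-peak error: (highest error - lowest error) / 2."""
    if not errors:
        raise ValueError("at least one error value is needed")
    return (max(errors) - min(errors)) / 2


# Errors in psi; only the extremes 0.2 and -0.3 are taken from the example above.
errors = [0.1, 0.2, -0.1, -0.3, 0.0]

print(f"stability = {stability_half_range(errors):.2f} psi")
```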

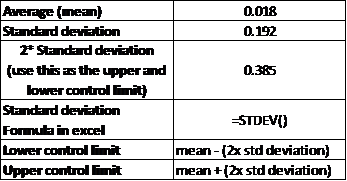

Another method is by determining the standard deviation. If you are using an Excel sheet, this is the simplest to calculate.

Basing it on the table below, just use the formula =STDEV() (see also Excel calculation of precision above) then highlight or choose the ‘UUC Actual Value’. A standard deviation equal to ‘0.192’ will be calculated as the ‘stability’.

Take note that the smaller the value of ‘stability’, the more stable the instrument is. You can also compare this value to the specification of the instrument, which can usually be found in its user manual.

The ‘stability value’ calculated above can also be used when calculating measurement uncertainty, as one of the components (sources of uncertainty) in the uncertainty budget.

How to Monitor Stability?

Below are the things that you can do to monitor the stability of your reference standard.

1. Collect all the past calibration certificates of your reference standards to be evaluated, the more the better. Check the data results.

2. Stability is determined by collecting data on a fixed interval. This could be data from your intermediate check. It can be daily, monthly, or every 3 months.

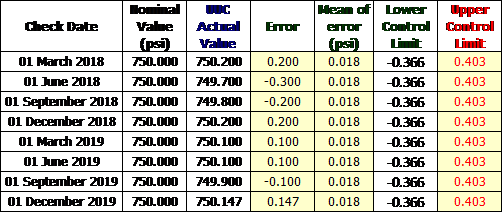

3. Organize your data in a table, as in the example below.

I have collected data through an intermediate check performed every three months on a Test Gauge.

Below are the data.

4. Determine the error, then calculate the mean and the standard deviation.

5. Plot the errors in the control chart.

As long as the error is within the control limit, we can be sure that the reference standard is very stable and in control.
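The monitoring steps above can be sketched in Python. The article does not say how the control limits are set, so this sketch assumes limits at the mean plus or minus three standard deviations of a baseline set of errors, a common control chart convention. All error values and function names are hypothetical.

```python
import statistics


def control_limits(baseline_errors, k=3.0):
    """Return (lower limit, center line, upper limit) as mean +/- k standard deviations."""
    center = statistics.mean(baseline_errors)
    spread = statistics.stdev(baseline_errors)
    return center - k * spread, center, center + k * spread


def out_of_control(errors, lower, upper):
    """Return the positions of the checks whose error falls outside the limits."""
    return [i for i, e in enumerate(errors) if not lower <= e <= upper]


# Hypothetical errors (psi) from earlier intermediate checks, used as the baseline.
baseline = [0.1, -0.1, 0.0, 0.2, -0.2]
lower, center, upper = control_limits(baseline)
print(f"control limits: {lower:.3f} to {upper:.3f} psi (center {center:.3f})")

# Hypothetical errors from the most recent checks.
recent = [0.1, 0.3, 0.6]
print("checks outside the limits:", out_of_control(recent, lower, upper))
```

Plotting the errors against these limits gives the control chart; a check that lands outside the limits is a signal to investigate the reference standard.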

What is a Drift and How to Determine Drift in Calibration Results?

If a measuring instrument has almost the same measurement output every time you compare its calibration data during re-calibration, we refer to its performance as ‘stable’ or it has good stability as presented above.

But what if the measurement output changes over time where it is reaching the tolerance limit every time we perform a scheduled verification or plotting it in the control chart for performance monitoring? If this is the case, then we can say that our instrument is ‘drifting’, the opposite of a stable reading.

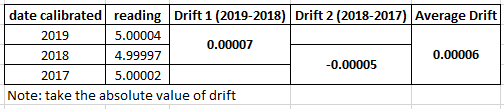

‘Drift’ is the change in output reading of instruments, it is the variation of the performance over time which can be observed by comparing all the calibration results from its calibration history. It is simply the difference between the past result from the present result which can be taken from the UUC’s calibration certificate.

How do we calculate drift? Choose a specific range that you need then summarize it in a table. Subtract the present value with the past value. See the example below:

A drift in our Standards or UUC is normal as long as it is within the acceptable limits and it is under control. Monitoring drift can be the same as the control chart for ‘stability’ that is presented above.

A drift can only be determined through the process of calibration. This is one main reason why we need to perform calibration. If we monitor and control drift, then we are confident that our Instruments are performing well.
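The drift calculation described above (present value minus past value) can be sketched as follows. The calibration results in the list are hypothetical readings at a single test point, listed from oldest to newest, and the function names are assumptions for this illustration.

```python
def drift(past, present):
    """Drift = present calibration result - past calibration result."""
    return present - past


def drift_history(results):
    """Drift between each pair of consecutive calibration results (oldest first)."""
    return [drift(earlier, later) for earlier, later in zip(results, results[1:])]


# Hypothetical results (psi) at the same test point from three successive calibrations.
results = [10.0, 10.5, 11.5]

print("drift between calibrations:", drift_history(results))
print("total drift:", drift(results[0], results[-1]))
```

Drift that keeps the same sign from one calibration to the next, as in this made-up history, suggests the instrument is moving steadily in one direction rather than scattering around a stable value.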

Traceability in Calibration

As stated, calibration is the comparison of an instrument to a higher or more accurate instrument. These higher accuracy instruments are called the reference standard and are sometimes also known as the calibrator, a master, or a reference.

This reference standard that is used to calibrate your instrument also has a more accurate master standard being used to calibrate, which is also linked to a much higher standard until it reaches the main source or SI.

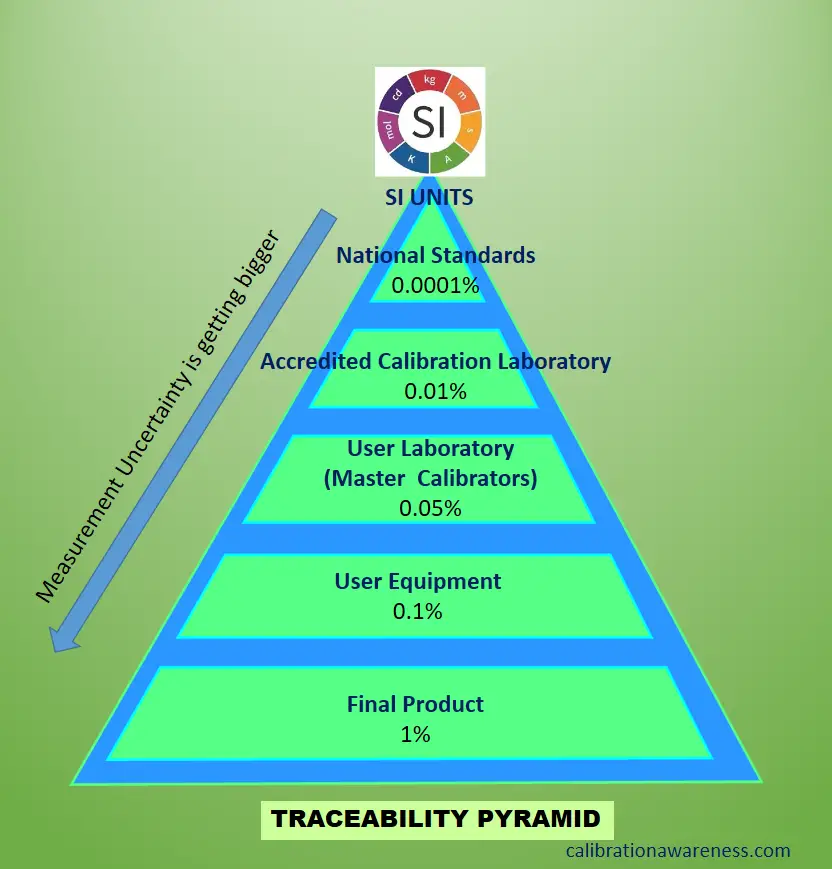

There is an unbroken chain of comparison being linked from top to bottom of the chain. It is passed to local from international standards, the topmost source of traceability in the comparison chain (as shown in the figure above).

This means that the 1 kilogram you used is also 1 kilogram no matter where you go. There is unity in every measurement. Traceability can be determined through its calibration certificate, indicating the results and reference standard used to calibrate your instrument.

Why is Traceability in Measurements Necessary?

- For companies engaged in manufacturing and engineering, it ensures that parts produced or supplied have the same or acceptable specifications when used by customers anywhere. Compatibility is not an issue.

- Traceability provides confidence to our measurement process because the validity of the measurement results is ensured for its accuracy.

- Traceability has a value; this value can be seen in a calibration certificate as the measurement uncertainty results. With these results, you can determine how accurate the measurement instruments are.

- A requirement by relevant laws and regulations to guarantee product quality.

- A requirement from a contract agreed by two parties (contractual provisions)- a traceable calibration

- Statutory requirements for safety – even though we have different units of measurement, we are confident that compatibility in terms of size, form, or level is not an issue anywhere it goes.

Where can we find the Traceability Information of a Calibrated Instrument?

Since traceability is very important, we should know how to check the traceability information of a calibrated instrument. We can find it in the calibration certificate issued once the instrument has been calibrated by an authorized laboratory; it is one of the items to check on the certificate.

In a calibration certificate, the traceability information is usually written in the middle part together with the reference standard used, at the bottom, or in both places.

This is a requirement, so a competent laboratory must not neglect it. Most important of all is the measurement uncertainty result; it should be reflected in the data results to ensure that you have a traceable calibration done by an accredited laboratory.

For a deeper explanation with evidence of traceability that you need to know, please visit my other post at this link.

What are the Differences Between Calibration, Verification, and Validation?

Calibration, verification, and validation are the terms that are most confusing if you are not aware of their differences and true meaning when it comes to the measurement process.

To differentiate these terms, below are the main points to remember:

- Calibration is simply the “comparison” of the unknown reading of a UUC to a known reading of a Reference Standard, also known as the Master.

- Verification is a process of “confirming” that a given specification is fulfilled.

- Validation is for “ensuring” the acceptability of the implemented measurement process, focusing on the final output of that process.

To learn more about their differences, including a concrete example, visit my other post at this link>>

Differences between Calibration, Verification, and Validation in the Measurement Process

We should also understand when to use calibration, verification, or both in our measurement process. Check out this link >> When To Perform Calibration, Verification, or Both?

Measurement Uncertainty

What Does Measurement Uncertainty Mean?

Calibration is not complete without Measurement uncertainty or Uncertainty of Measurement. This is where the unbroken chain of comparison is being connected or linked.

What does uncertainty mean in calibration? Measurement Uncertainty is the value being displayed to quantify the doubt that exists on a specified measurement result. Since no measurement is exact, there is always an error that is associated with every measurement. To determine the degree, effect, or quantity of this error that exists in every measured parameter, we compute or estimate Measurement Uncertainty.

During uncertainty computation or estimation, we identify all the valid sources of errors that influence our measurement system. It can be from our procedures, instruments, environment, and many more. We evaluate and quantify the value of each error and combine them into a single computed value.

What is the Use of Measurement Uncertainty (MU) in Measurements?

You might just be wondering why Measurement Uncertainty is reported in the calibration certificate. The following are the important uses of measurement uncertainty:

- MU is used for conformity assessments, an important factor in generating a decision rule.

- MU is used for calculating the TUR (Test Uncertainty Ratio) of instruments to determine their suitability for a specific measurement process.

- MU is evidence of the traceability of an accredited lab or any calibration performed.

- MU shows how accurate your instrument is. A smaller uncertainty value means more accurate results.

- If you do not have a basis for your tolerance, MU can be used as your tolerance.

- If you are calculating your own measurement uncertainty, MU in the calibration report is used as the main contributor to be included in the uncertainty budget.

Where Can We Find Measurement Uncertainty?

We can find measurement uncertainty results in the calibration certificate, usually on the data results page.

Visit my other posts about measurement uncertainty to learn more in this link >> 8 Ways How You Can Use the Measurement Uncertainty Reported in a Calibration Certificate

Learn how to calculate measurement uncertainty for an analog pressure gauge in this link >>Measurement Uncertainty for Analog Pressure Gauges (GUM Method)

Difference Between Measurement Uncertainty and Tolerance

Measurement Uncertainty (MU)

Usually defined as the quantification of the doubt. If you measure something, there is always an error (a doubt – no result is perfect) included in the final result since there are no exact measurement results.

Since there are no exact measurement results, what we can do is to determine the range where the true value is located. This range can be determined by adding or subtracting the limits of uncertainty (or the measurement uncertainty result) to the measurement result.

We do not know what the true value is, but because of the measurement uncertainty result, it will show us that the true value lies within the limits of the calculated measurement uncertainty.

“The smaller the measurement uncertainty, the more accurate or exact our measurement results.”

For example, based on the calibration certificate of a pressure switch, it has a measurement result of 10 psi with a calculated measurement uncertainty of +/- 0.3 psi.

The certificate shows a value of 10 psi, but in reality the true value lies somewhere between 9.7 and 10.3 psi.

Tolerance

It is the maximum error or deviation that is allowed or acceptable as per the design of the user for its manufactured product or components.

If we perform a measurement, the tolerance value will tell us if the measurement we have is acceptable or not.

If you know the tolerance, it will help you answer questions like:

1. How do you know that your measurement result is within the acceptable range?

2. Is the final product specification pass or fail?

3. Do we need to perform adjustments?

“The bigger the tolerance, the more product or measurement results will pass or be accepted.”

For example, a pressure switch is set to turn on at 10 psi. The process tolerance limit is +/- 1 psi. Therefore, the acceptable range for the switch to turn on is from 9 to 11 psi. Beyond this range, we need to perform adjustment and calibration.
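To contrast the two ideas, the sketch below builds both intervals from the pressure switch examples in this section: the uncertainty interval (10 psi with +/- 0.3 psi) and the tolerance interval (10 psi with +/- 1 psi). The helper names are hypothetical, and checking that one interval sits inside the other is only an illustration, not a decision rule defined in this article.

```python
def interval(center, half_width):
    """Return (low, high) for center +/- half_width."""
    return center - half_width, center + half_width


def contains(outer, inner):
    """True when the inner interval lies completely inside the outer interval."""
    return outer[0] <= inner[0] and inner[1] <= outer[1]


# Measurement uncertainty: where the true value is expected to lie.
uncertainty_interval = interval(10.0, 0.3)   # about 9.7 to 10.3 psi

# Tolerance: which values are acceptable for the process.
tolerance_interval = interval(10.0, 1.0)     # 9 to 11 psi

low, high = uncertainty_interval
print(f"true value expected between {low:.1f} and {high:.1f} psi")
print(f"acceptable range is {tolerance_interval[0]:.1f} to {tolerance_interval[1]:.1f} psi")
print("uncertainty interval inside tolerance:", contains(tolerance_interval, uncertainty_interval))
```

The uncertainty interval describes the measurement itself, while the tolerance interval describes what the process will accept.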

See more explanation and presentation in this link >> Differences Between Accuracy, Error, Tolerance, and Uncertainty in Calibration Results

ISO 17025 – Calibration Laboratory Quality Management System

What is ISO 17025?

- International Standard ISO/IEC 17025:2017, General requirements for the competence of testing and calibration laboratories

- As the title implies, it is a standard for laboratory competence, unlike ISO 9001, which is a certification standard.

- It is an accreditation standard used by accreditation bodies where a demonstration of a calibration laboratory’s competency is assessed with regard to its scope and capabilities. Accreditation is simply the formal recognition of a demonstration of that competence.

- It is also a Quality Management System that is comparable to ISO 9001 but it is designed for Calibration Laboratories, specifically 3rd Party or External Calibration Labs.

- The usual contents of the quality manual follow the outline of the ISO/IEC 17025 standard.

- It can be divided into two principal parts:

1. Management System Requirements – similar to those specified in ISO 9001:2015, primarily related to the operation and effectiveness of the quality management system within the laboratory.

Some of the requirements include:

- Internal audit

- Document and record control of the management system documentation.

- Handling of complaints

- Control of Non-conforming calibration works

- Implementing corrective actions

- Monitoring improvements

- Maintaining Impartiality and confidentiality

- Management review meeting

- Risk Analysis for Improvements

2. Technical Requirements – include factors that determine the correctness and reliability of the tests and calibrations performed in the laboratory. Some of these factors are personnel competency, facilities and environmental conditions, equipment, calibration methods, reporting of results, and measurement uncertainty calculations.

- These requirements describe the systems and procedures that testing and calibration labs must implement in organizing and managing their quality system, particularly when seeking accreditation.

- Since ISO 17025 is a quality management system specifically for calibration laboratories, it is also a good tool or a guide if you are managing an in-house or internal lab. Following its requirements will help you achieve most of the auditors’ requirements during internal or customer audits.

To learn more about the requirements of ISO 17025:2017, visit my other post at the link below:

ISO/IEC 17025:2017 Requirements: List of Documents Outline and Summary

Learn the basic elements regarding In-House Calibration Management Implementation by visiting this link. >>> ELEMENTS IN IMPLEMENTING IN-HOUSE CALIBRATION.

Thank you for visiting. Please subscribe and share

You can also connect with me on my Facebook page.

Best Regards,

Edwin