With the pandemic that we have experienced, affecting every industry in the world, one strategy to avoid an overdue instrument and ensure an updated calibration program is to extend the calibration due date or calibration interval of our instruments.

But doing this requires a plan that still ensures the confidence and reliability of our instruments. That plan is to implement a calibration interval analysis procedure.

The calibration interval, or frequency of calibration, is one of the topics I am asked about most. Below are actual questions that I have received:

1. Is a recalibration a requirement?

2. How can we do interval analysis and how long can we extend?

3. Is there a standard that controls the interval between each calibration process?

4. Is there a standard guide that is set to be followed?

5. Is there a set of rules for the frequency of calibration of an instrument?

6. It is difficult to understand the logic behind the 12-month interval. Shouldn’t this depend on how often the instrument is used?

I have read a lot of documents online, and honestly, most are complicated and too technical for a beginner to understand. I will present the simplest method, the one that makes the most sense to me, and I hope you can understand it as well.

In this post, I will present a simple method with an example that you can follow as a guide in order to analyze and provide clear details about why you need to extend or reduce the calibration interval of your instruments.

Furthermore, I will also present the following:

1. What is a calibration Interval?

2. Why Do We Need to Determine Calibration Frequencies of Instruments

3. How to Determine Calibration Interval of Instruments

a. 4 Major Objectives For the Implementation of Calibration Interval Analysis

b. How to Establish the Initial Calibration Interval

c. How to Determine the Fixed Calibration Interval

> Example of Calibration Interval Calculation of Pressure Test Gauge – Method Implementation Procedure

4. Reference Guides that We Can Use to Perform Other Methods of Analysis

5. Some Techniques that I observe others are doing in relation to calibration Interval

6. Conclusion

I hope that in this post, all those questions will be answered.

Let us start with the basics. Read on.

Calibration Interval Definition – What is A Calibration Interval?

A calibration interval goes by several names: calibration frequency, calibration period, or simply calibration due date. Put simply, the calibration interval is the number of days between scheduled calibrations.

It answers the question, “How often should we calibrate our instruments?” or “How long before we calibrate our instruments again?”

Having a calibration interval is a must. That said, calibration laboratories are not allowed to recommend a calibration frequency on their own. ISO 17025, under clause 7.8.4.3, states that “A calibration certificate or calibration label shall not contain any recommendation on the calibration interval, except where this has been agreed with the customer.”

One of the reasons is that once the instrument is out of the lab, the lab has no control over it, therefore the calibration interval is not guaranteed.

The user should determine the calibration intervals needed for his or her instruments. These intervals then become part of the in-house calibration program, which should be properly documented.

Any instruments that will not fall under the calibration Interval analysis may fall under the ‘calibration-not-required’ status. Check my other post in this link >> calibration-not-required implementation

Some Reasons Why We Need to Determine Calibration Frequencies of Instruments

We need to determine the calibration frequency for the reasons below:

1. To save cost. – by calibrating less frequently because of a longer calibration interval but ensuring reliability.

2. To satisfy requirements of customer or regulatory bodies

3. The need to perform recalibration – the reasons why we “calibrate” also apply to why we “recalibrate”

a. Below are some technical reasons why we recalibrate:

> Because of drift

> To detect any calibration problems before they affect quality

> Exposure to harsh environment

> Over usage

> To assure accuracy and reliability

> To ensure traceability

4. Compliance with the Requirements of ISO Standards – ISO 17025:2017 and ISO 9001:2015 both contain requirements related to calibration intervals

a. Requirements for Calibration Frequency as per ISO 17025:2017 Standards are for:

i. Establishing calibration program for calibrated Instruments

> As per clause 6.4.7 The laboratory shall establish a calibration program, which shall be reviewed and adjusted as necessary in order to maintain confidence in the status of calibration.

ii. Monitor Validity of results

> As per clause 7.7.1 The laboratory shall have a procedure for monitoring the validity of results. g) retesting or recalibration of retained items;

iii. For documentation as part of Technical Records,

> As per clause 6.4.13 Records shall be retained for equipment that can influence laboratory activities. The records shall include the following, where applicable: e) calibration dates, results of calibrations, adjustments, acceptance criteria, and the due date of the next calibration or the calibration interval;

b. Related Calibration Interval Requirements under ISO 9001:2015, as per clause 7.1.5.2 Measurement traceability

i. When measurement traceability is a requirement or is considered by the organization to be an essential part of providing confidence in the validity of measurement results, measuring equipment shall be: a) calibrated or verified, or both, at specified intervals, or prior to use, against measurement standards traceable to international or national measurement standards;

ii. The organization shall determine if the validity of previous measurement results has been adversely affected when measuring equipment is found to be unfit for its intended purpose, and shall take appropriate action as necessary

If you read these requirements, you will see that they do not prescribe any specific method for determining calibration intervals. Therefore, any method that works for you is acceptable. Still, it is better to base it on a published document or guide, such as ILAC G24, which is free to download.

How to Determine Calibration Interval of Instruments

There are 2 types of calibration intervals that we need to complete here, these are:

- Initial Calibration Interval

- Final or Fixed Calibration Interval

First, let us determine some main objectives for implementing calibration interval analysis.

4 Major Objectives For Implementing Calibration Interval Analysis

- To designate Initial Calibration Intervals – this is the starting calibration interval based on experience and recommendations.

- To extend the calibration interval – a given calibration interval is extended step by step until a fixed interval is reached. This also applies to situations like this pandemic, where calibration services are difficult to access and we want to extend the interval temporarily.

- To reduce the calibration interval – this applies when we encounter an out-of-tolerance result, in which case the interval is reduced as per the implementation rule (see the example presentation below).

- To determine the fixed calibration interval (final interval) – this is the main calibration interval that we want to arrive at, based on actual use and adequately justified.

How to Establish the Initial Calibration Interval

The initial calibration interval is the interval we start with, as decided by an expert. It is only a starting point and should not be treated as the final calibration frequency. Since in most cases (for example, start-ups) we do not yet have data to justify this interval, it is known as ‘engineering intuition’.

ILAC G24 states:

“The so-called “engineering intuition” which fixed the initial calibration intervals, and a system which maintains fixed intervals without review, are not considered as being sufficiently reliable and are therefore not recommended.”

The basis for the initial calibration interval is entirely up to you as the user. It could be based on the criteria below:

1. Manufacturer Requirements – recommended by the manufacturer

2. Frequency of use – the more the instrument is used, the shorter the calibration interval

3. Requirements of regulatory bodies (example: required by the government)

4. Past experience of the user with the same type of instrument

5. Criticality of use – more critical instruments have higher accuracy or very strict tolerances, and therefore need a shorter calibration interval

6. Customer Requirements

7. Conditions of the environment where the instrument is being used

8. Published Documents

In some cases, the initial calibration interval can become the ‘fixed/final calibration interval’, provided that we have evidence to justify it for the specific instrument. I will share one method for this justification later in this post.

Remember that the above choices are only the basis for an initial interval. Our job is not finished yet. The next move is to establish the final or ‘fixed interval of calibration’ using a specific method or procedure. This is where the method that I present here comes in.

How to Determine the Fixed Calibration Interval

My purpose here is to present and help you understand the calibration interval analysis procedure that you can implement if it suits your needs.

A disclaimer: this is my own implementation, based on my understanding and experience. It may not match your situation, so review it properly before use. It is your responsibility as the user to evaluate the effectiveness of this method if you implement it, and to take responsibility for the consequences of decisions made as a result of using it.

Now let us start…

I will present here a modified method based on the principle of a control chart and the calendar-time method as per ILAC G24 (OIML D 10:2007). I will call this method the ‘Floating Interval Method’ because the interval can increase or decrease depending on the calibration results.

To establish a fixed interval, below is the calibration interval analysis procedure:

1. Gather historical records of the instrument using its calibration report for the past 2 years or more.

2. Choose at least 3 readings that are mostly used, for example, the min, middle, and max to determine stability or drift.

3. Use Excel to summarize the readings.

4. Using the specifications and the calibration results, create a chart with control limits (a control chart). See the table below, made in Excel (there are many control chart tutorials online that you can follow).

5. Understand and analyze the trend.

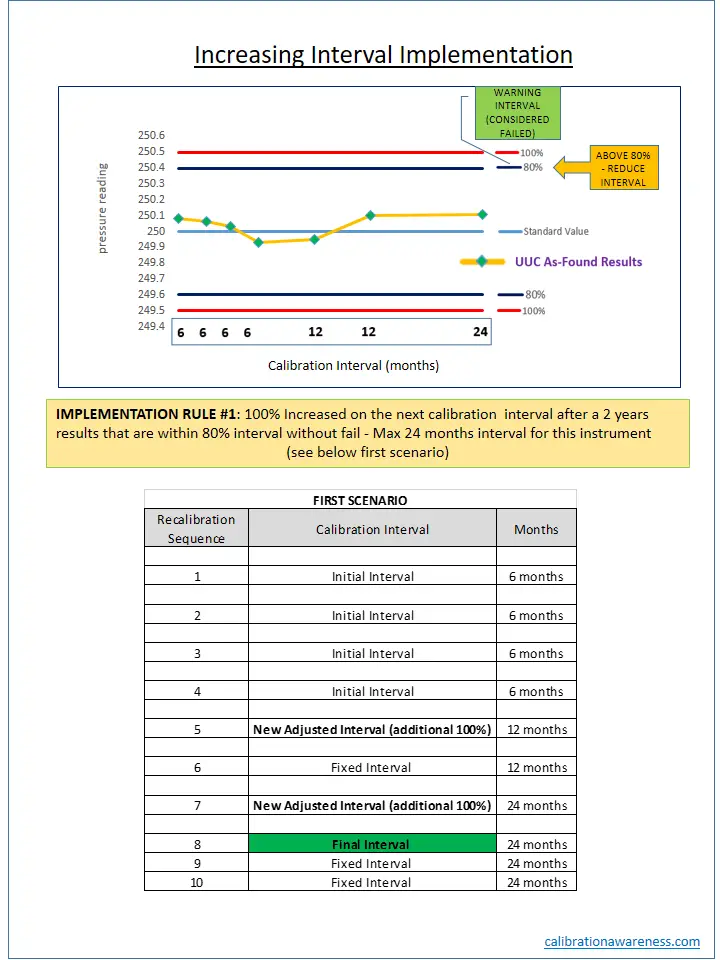

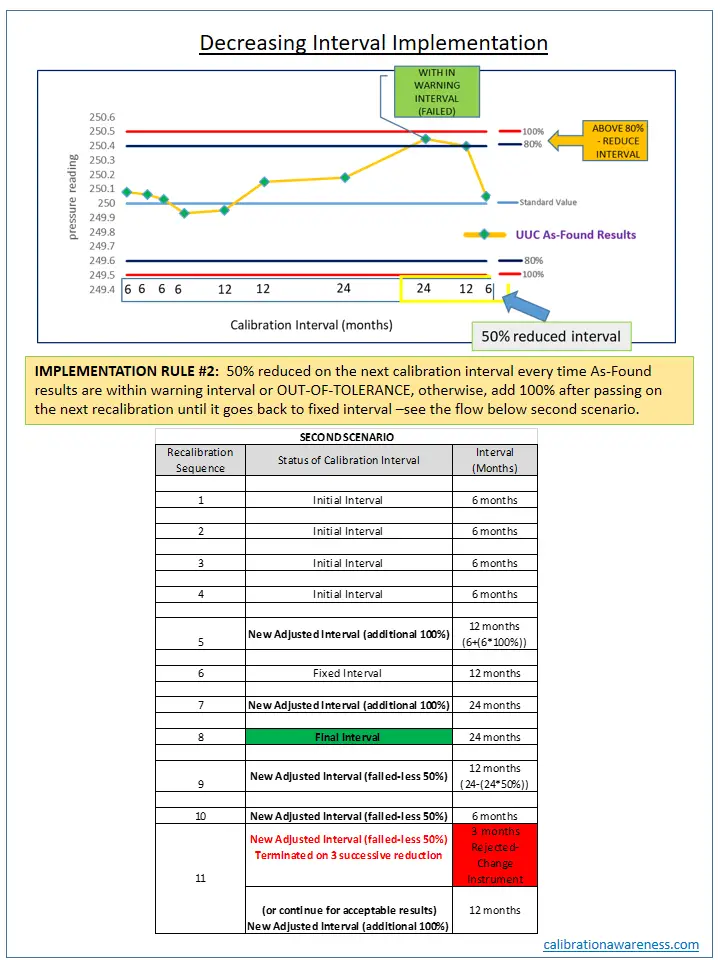

> Based on the trend, you can decide whether to increase or decrease the calibration interval, using the Implementation Rules that you created for the Floating Interval Method (see the presentation below).

a. Implementation Rule #1: After the initial 2 years, increase the next calibration interval by 100% if the results are within the 80% limits, up to a maximum interval of 24 months.

b. Implementation Rule #2: Reduce the next calibration interval by 50% every time the As-Found results fall within the warning zone or are OUT-OF-TOLERANCE, and add the 50% back after the instrument passes the next recalibration (see the second scenario in the flow below).

6. Determine the final interval.
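The two implementation rules in step 5 can be sketched in a few lines of Python. This is only one reading of the rules, not a definitive implementation: the function name `next_interval`, its parameters, and the as-found error values are made up for illustration. The sketch assumes the initial 2-year history has already been collected, and it treats "adding back the 50%" as the same doubling step used in Rule #1.

```python
def next_interval(current_months, as_found_error, tolerance,
                  warning_fraction=0.8, max_months=24):
    """Return the next calibration interval in months (hypothetical helper).

    Rule #1: as-found error within the 80% limit -> double the interval,
             capped at max_months.
    Rule #2: error in the warning zone or out of tolerance -> halve it.
    """
    if abs(as_found_error) <= warning_fraction * tolerance:
        return min(current_months * 2, max_months)
    return current_months / 2


# Walk through a made-up sequence of as-found errors (psi) for a gauge
# with a +/-0.5 psi tolerance, starting from a 6-month interval.
interval = 6
for error in [0.1, 0.2, 0.45, 0.1]:
    interval = next_interval(interval, error, tolerance=0.5)
    print(f"as-found error {error:+.2f} psi -> next interval {interval:g} months")
```

Because a halved interval is doubled again after the next pass, the sketch needs no extra state to restore the interval. You may prefer to track the previous interval explicitly if your own rule differs.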

Example of Calibration Interval Calculation of Pressure Test Gauge – Method Implementation Procedure

To understand this better, below is an example. The instrument is a Pressure Test Gauge. We will establish its fixed calibration interval using the ‘Floating Interval Method’ (I just made this name up; you can call it anything you want).

Assume you have a test gauge that is calibrated every 6 months as an initial interval. After 2 years, you will have generated a performance history of 4 calibration reports.

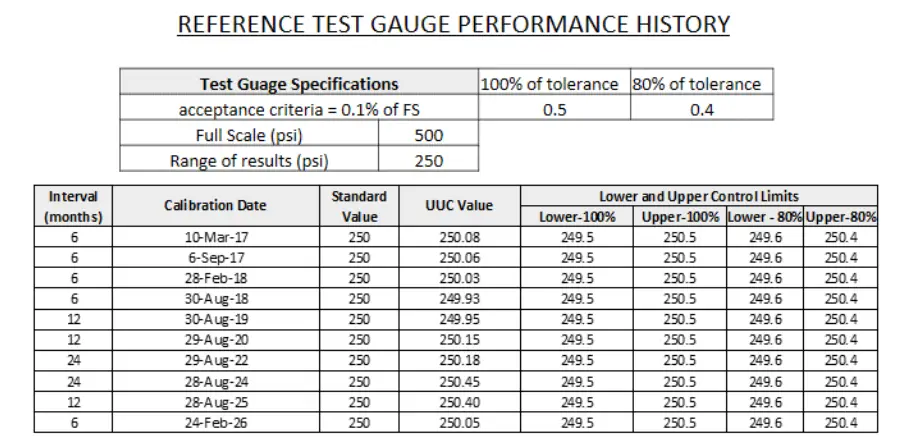

We can now use the results to analyze the stability or drift. We will choose 3 test points, the min, mid and max ranges. But in this example, I will plot only the mid-range using MS Excel (If you have special software to perform this, it is much easier).

Again, for simplicity, I will use the manufacturer’s specifications as the tolerance limit.

A. For steps 1 to 3 above: the first four 6-month calibrations are the data for 2 years. These are the basis of the fixed interval analysis. I chose only the mid-range to simplify the presentation.

B. For steps 4 to 6, see the presentation below. It shows “HOW TO INCREASE OR DECREASE A CALIBRATION INTERVAL”.

CHART INTERPRETATION – INCREASING AND DECREASING INTERVAL

As a basis for increasing the calibration interval: if the readings stay within the control limits you specified, the instrument is stable. In this example, the test gauge has been stable for 2 years, so as per the implementation rule we can extend the interval to 1 year, and later up to a maximum of 24 months (this is my implementation rule; you can adjust it, but don’t overdo it).

On the other hand, if the As-Found data show that the readings failed, then you need to decrease the interval. I decided to decrease it by 50%, which I believe is the most suitable reduction. See the presentation below.

Please take note that this is not a one-size-fits-all method. You can change or adjust it based on your own needs and implementation rule.

This shows the importance of recording the performance history of our instruments. Past records are used to justify why we extend or reduce calibration intervals.

I also want to emphasize that I did not include the Measurement Uncertainty as part of the Decision Rule to make the presentation much simpler.

Reference Guides for Other Methods of Analysis

There are no specific standards that provide the ‘exact’ interval to be followed as a basis for a calibration interval of any instruments in a calibration lab. All publications and guides are only recommendations and are usually optional to follow or implement unless required by a regulatory body.

For those who want to explore other methods, check the free guides below:

- ILAC-G24 Guidelines for the determination of calibration intervals of measuring instruments

- GMP 11 Good Measurement Practice for Assignment and Adjustment of Calibration Intervals for Laboratory Standards

- 5 Best Calibration Interval Guides

Some Techniques I Observe Others Are Doing in Relation to Calibration Interval

- They include a ‘calibration interval tolerance’. I smiled at first when I heard this but it’s true, the calibration interval has a tolerance limit. An example is a calibration interval of 1 year +/- 2 months.

- An instrument that is kept in storage for long periods is calibrated only each time it is to be used, before it is used for measuring.

- Some instruments have no calibration due date at all, because this kind of instrument is calibrated before use as part of everyday operation.

- There are calibration intervals based on the number of times the instrument is used. For example, we will only calibrate an instrument after it is used 500 times.
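As an illustration of the first technique in the list above, here is a minimal sketch that turns "1 year +/- 2 months" into an acceptable calibration window. The helper names `add_months` and `due_window` and the sample date are made up for this example, and clamping to the end of the month is my own assumption about how to handle short months.

```python
from datetime import date
import calendar


def add_months(d, months):
    """Shift a date by whole months, clamping the day to the month's end."""
    month_index = d.year * 12 + (d.month - 1) + months
    year, month = divmod(month_index, 12)
    month += 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def due_window(last_calibration, interval_months, tolerance_months):
    """Return (earliest, nominal, latest) due dates for the next calibration."""
    earliest = add_months(last_calibration, interval_months - tolerance_months)
    nominal = add_months(last_calibration, interval_months)
    latest = add_months(last_calibration, interval_months + tolerance_months)
    return earliest, nominal, latest


# Hypothetical last calibration date, 12-month interval, +/- 2 months.
earliest, nominal, latest = due_window(date(2020, 3, 15), 12, 2)
print(f"calibrate between {earliest} and {latest} (nominal {nominal})")
```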

As you can see, there is no single solution that fits all; it all depends on the user. The important thing is that we have a properly planned procedure to follow, with justifications or proper documents as evidence of why we chose such a method.

You can tweak or add additional details or criteria to suit your needs. You can gather most methods from reference guides and combine each of them. It is up to you how you can create the best calibration interval analysis program for you.

Conclusion

Determining the calibration interval is one of the main requirements when it comes to instrument maintenance and quality control. And based on my exposure to different users, it is one of the most asked-about topics.

In establishing a calibration interval, there are 2 main steps that we need to follow: first, establish the Initial Interval; second, determine the Final or Fixed Calibration Interval as we progress. Whether we keep the initial interval as the fixed interval or establish a new fixed interval, the important thing is that we have data to justify our decision. There should be a method that we follow in order to implement this. I call the method in this post the ‘Floating Interval Method’.

In this article, I have presented:

1. What is a calibration Interval?

2. Why Do We Need to Determine Calibration Frequencies of Instruments

3. How to Determine Calibration Interval of Instruments

a. 4 Major Objectives For the Implementation of Calibration Interval Analysis

b. How to Establish the Initial Calibration Interval

c. How to Determine the Fixed Calibration Interval

> Example of Calibration Interval Calculation of Pressure Test Gauge – Method Implementation Procedure

4. Reference Guides that We Can Use to Perform Other Methods of Analysis

5. Some Techniques that I observe others are doing in relation to calibration Interval

If you have any concerns about this method, please feel free to comment. This is not a perfect procedure, but I believe it is a good start for understanding the principle behind the analysis.

If you have your own method, please share it by commenting below.

If you liked this article, please share it on FB and subscribe to my email list.

Thanks and regards,

Edwin

81 Responses

ARTURO ORTIZ VARGAS MACHUCA

Dear

Thank you very much for the article

One point: the uncertainties have not been included.

Inquiries

1.- Instead of those limits of 100% and 80%, you can place the limits of a control chart graph

2.- For the calculation you can use the intermediate checks

edsponce

Hi Arturo,

You are welcome.

Yes, you are correct about the limits. Using control chart limits is the second method under ILAC G24. We can also implement it as you have suggested.

We can also include the uncertainty results as per the decision rule of ISO 17025 to make our analysis procedure stricter. Good point about using the intermediate checks to acquire more data as the basis for analysis.

I appreciate your comments.

Edwin

Arturo Ortiz

Thanks

edsponce

You are welcome.

Amiel Eleazar Macadangdang

HI Sir Edwin,

Thank you for sharing this. I can now start to plan a good calibration program for our laboratory.

-Amiel

edsponce

Hi Amiel,

You are welcome. Good luck with the implementation of calibration interval procedure on your calibration program.

Edwin

abdelkarim mezghiche

thank you very much, clear and concise

edsponce

Hi Abdelkarim,

You are welcome. Thank you for reading.

Wajdi adnan

Thank you Mr. Edwin long live your hands support for these clarifications.

edsponce

Hi Wajdi,

You are welcome. I am glad you liked it.

Edwin

Zainal Abidin

Thank for your knowledge sharing …..

edsponce

You are welcome Zainal. Thank you for reading.

Best regards,

Edwin

sharifa

Thank you Edwin. This is very good information

edsponce

Hi Sharifa,

You are welcome. Thank you for reading.

Thanks and regards,

Edwin

Rose Gaffar

6.2.2 The laboratory shall document the competence requirements for each function influencing the results of laboratory activities, including requirements for education, qualification, training, technical knowledge, skills and experience.

How do you show evidence for the above? Is it through multiple things? Training binders? Job description? Or a specific document with the above?

edsponce

Hi Rose,

It is through multiple things. All the things you mentioned are acceptable. The important thing is that they are documented and available when an auditor asks for them.

Evidence can come from training certificates, recorded experience, recorded qualifications, and performance reviews that are properly filed and kept up to date.

Thanks and regards,

Edwin

Vishwajeet Patil

Hello Sir,

100 % tolerance 0.5 mm and 80% tolerance 0.4 mm on which basis these tolerances are considered to generate the control limits.

Regards,

Vishwajeet

edsponce

Hi Vishwajeet,

100% is the full tolerance limit. The 80% is from ILAC G24. It is optional; you can set your own limit based on your preference. You can widen or narrow it, depending on how strict you are with your implementation.

I hope this helps,

Edwin

Paul

0.5 PSI and 0.4 PSI, it is a pressure gauge used in the example, the units are pounds per square inch not millimetres

Vishwajeet Patil

Hi Edwin, thanks for the reply. But which tolerance should I use to generate the control chart limits? Take a Vernier caliper, for example.

Should I take the tolerance of the product that we are inspecting with the Vernier caliper, or the least count of the Vernier caliper, as the tolerance?

Please guide me.

Regards,

Vishwajeet.

edsponce

Hi Vishwajeet,

Product tolerance is too big to be used as the tolerance. Use the manufacturer specifications or the MPE (maximum permissible error) of the caliper as the tolerance. Check the accuracy section of the caliper’s specifications, if you have them.

Thanks and regards,

Edwin

Vinod

Hi Edwin, thanks for your wonderful blog. One thing I want to know: you have taken the data from the calibration certificates for the initial 2 years (at intervals of 6 months), but after these 4 measurement readings, how did you calculate the further readings and errors? Please specify.

edsponce

Hi Vinod,

All data are taken from the results of the calibration certificates. The 6-month calibrations during the first 2 years are the initial calibration interval. Then, based on this history, I extended the calibration interval to 1 year, and then increased it further, as per the procedure I presented, up to 2 years.

There is no need to calculate the error. Every time there is a new calibration certificate, just copy the data into the table and plot it on the chart, the same as for the first 6-month calibrations. Your goal is to check that the calibration results are within the tolerance limits every time a calibration takes place, because based on these results you can decide whether to extend or reduce the calibration interval.

I hope this helps,

Edwin

Vinod

Hi Edwin,

Good morning. Understood, but how much data should we collect before increasing or decreasing the frequency? In our case, by default we calibrate on a yearly basis. Is 2 years of data sufficient, or do we need more? And if the measurement results are found within tolerance, can we extend the interval to 2 years instead of 1 year? One more doubt: can we reduce the interval for verniers and micrometers to 6 months instead of 1 year without any data study? These are used regularly, and many times the vernier error is found to be excessive because the instrument has been dropped.

edsponce

Hi Vinod,

2 years of data is ok, but the more the better. More data means more proof of the instrument’s stability or accuracy over a given period. There is no set rule for this. As long as you can prove its stability and you have a good history, you can extend further based on your analysis. But do not overdo it.

Regular usage and frequent dropping are already good indications that you should shorten the calibration interval. Still, a stability study is the best thing to have. You should perform a simple verification check if this is the case. You can also acquire data from your intermediate checks.

Best regards,

Edwin

Vinod

Edwin Sir,

One more query: in the case of reference equipment, what criteria should we follow to increase or decrease the frequency? Should we use the accuracy spec of the reference equipment or another parameter like uncertainty? Please explain…

In NABL (the accreditation body in India), a document is available where the frequency is given for equipment by domain, but it also mentions that the recommendation is based on the present practice of NPL, India, and that a laboratory may refer to ISO 10012 and ILAC G24 to decide the periodicity of calibration other than the above recommendations.

edsponce

Hi Vinod,

Good day!

There is no single answer to this. It depends on how strict you, as the user, want to be with your implementation. For me, it is much simpler to use the accuracy specs of the standard. But you may also combine the specifications of the standard with its uncertainty if you choose to; I believe this is also a good option when you want a wider tolerance. Use the RSS (Root Sum Square) method for this combination.
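As a rough illustration of the RSS combination, the sketch below combines an accuracy specification with an uncertainty value. The function name `combined_tolerance` and the 0.2 psi uncertainty are made up for this example; the 0.5 psi specification is the one from the pressure gauge example. It assumes both values are in the same unit and are treated as independent.

```python
import math


def combined_tolerance(accuracy_spec, uncertainty):
    """Root-sum-square (RSS) combination of two independent contributions."""
    return math.hypot(accuracy_spec, uncertainty)


# 0.5 psi accuracy spec combined with a hypothetical 0.2 psi uncertainty.
limit = combined_tolerance(0.5, 0.2)
print(f"combined tolerance limit: +/-{limit:.3f} psi")
```

The combined limit is always wider than either value alone, which is why this option gives a wider tolerance than using the accuracy specs by themselves.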

Another technique that you may implement is a tolerance based on the standard deviation, following the principle of the control chart, where you use 2 and 3 standard deviations as the control limits.

The recommendation from NABL (or another trusted source) is ok, but you should use it only as an initial interval. Once you have good data, or a history of performance, you have the option to increase, decrease, or maintain the calibration interval based on your analysis. At that point, you have good data to prove the instrument’s accuracy at the chosen interval.

I hope this helps,

Edwin

Vinod

Hi Edwin Sir,

There is a requirement of NABL as mentioned below:

Long term stability data shall be generated by laboratories by preparation of control /trend charts based on successive calibration of their standard(s)/master(s) (preferably without adjustments)*.

This shall be established by laboratories within two years based on minimum of four calibrations from the date on which laboratories apply for NABL accreditation. For the accredited laboratories, this shall be established within a period of two years w.e.f. the date of issue.

The laboratories may need to get their standard(s)/master(s) calibrated more frequently to generate the stability data within the above stipulated time.

Till two years, the stability data provided by the manufacturer of the standard(s)/master(s) can be utilized for estimation of uncertainty.

In case the stability data from the manufacturer is also not available, the accuracy specification as provided by the manufacturer can be used. However, the manufacturer’s data will not be accepted after the two year period as mentioned above since the laboratories are expected to establish their own stability data by that period.

*In cases where the standard(s)/master(s) are adjusted during its calibration pre-adjustment data needs to be used for the preparation of control/trend charts.

Please explain how can we do it and what will be acceptance criteria.

Regards

Vinod Kumar

edsponce

Hi Vinod,

NABL is requiring you to include the stability data for the calculation of measurement uncertainty.

If the manufacturer has provided stability data in their specifications, then you can use that directly, or the accuracy specifications if stability is not given. But this applies only for the first 2 years, until you have determined your own stability value.

To generate your own stability data, below are my suggestions.

For the first 2 years, you need to gather calibration results at a 6-month initial calibration interval. This means that you will have 4 calibration results that you can plot in a trend/control chart.

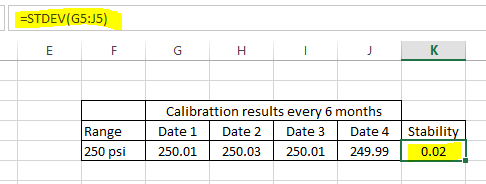

Stability can be calculated by the following steps:

1. Determine the range that you want to analyze, for example, based on my post, a pressure range of 250 psi.

2. With a 6-month interval over 2 years, you will have 4 calibration results. Take the 250 psi results from all 4 certificates.

3. Enter them in Excel, then get the standard deviation. The standard deviation is equal to the stability. See the example table below:

4. The stability result will be included in the uncertainty budget to calculate the uncertainty.

You need this stability data as part of the Measurement Uncertainty calculation. It will be one of the error contributions in the uncertainty budget.

The tolerance used for acceptance criteria is not needed to calculate stability, but you need it to monitor stability when plotting the control chart or trend chart. You may use the manufacturer specs for the acceptance criteria, or use 2 and 3 standard deviations as the limits in the control chart: multiply the stability value by 2 for the first limit (warning) and by 3 for the final limit (failed).
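The same calculation can be sketched outside Excel. The four readings below are made-up values for the 250 psi test point, and `stability_limits` is a hypothetical helper. The sketch assumes the sample standard deviation (the same quantity as Excel's STDEV) and centers the limits on the mean of the readings, which is the usual control chart convention but is my own assumption here.

```python
import statistics


def stability_limits(results):
    """Compute stability (sample standard deviation) and 2/3 sigma limits."""
    mean = statistics.mean(results)
    stability = statistics.stdev(results)  # sample standard deviation
    return {
        "mean": mean,
        "stability": stability,
        "warning": (mean - 2 * stability, mean + 2 * stability),
        "fail": (mean - 3 * stability, mean + 3 * stability),
    }


# Hypothetical 250 psi results taken from 4 calibration certificates.
readings_250_psi = [250.1, 249.9, 250.2, 250.0]
limits = stability_limits(readings_250_psi)

print(f"stability: {limits['stability']:.3f} psi")
print(f"warning limits: {limits['warning'][0]:.2f} to {limits['warning'][1]:.2f} psi")
print(f"fail limits: {limits['fail'][0]:.2f} to {limits['fail'][1]:.2f} psi")
```

When a new calibration result arrives, appending it to the list and recomputing gives updated limits based on more data.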

I hope this helps.

Edwin

Vinod

Thanks for your valuable suggestion, all requirement understood.

Initially we calculated the stability from 4 calibration results and set the limits at 2 std. dev. and 3 std. dev. for the acceptability of future results. For the next calibration, should the error simply fall within these control limits, or does the stability need to be recalculated? If it needs to be recalculated, should we consider all 5 calibration results, including the previous 4, or use some other mechanism? This will help us better check the performance of the equipment at successive calibrations.

edsponce

That is great. The more calibration data you have, the better the stability results.

Keep it up.

Edwin

Vinod

Dear Edwin Sir,

Actually, your last reply was not entirely clear to me. My query is: what should the acceptance criteria for the control chart be?

You mentioned that the accuracy provided by the manufacturer can be used, or 2 and 3 standard deviations. Please let me know of which values we need to calculate the 2 and 3 std. dev.

And as per the requirement, the stability data is calculated for the initial 2 years, but what should be done after that period? I mean, how do we enter the results in the control chart for stability after the first 2 years of calibration results?

edsponce

Hi Vinod,

I am sorry for the confusion. I suggest you take some time to review the basics of a control chart. The 2 and 3 standard deviations (or 2 and 3 sigma) are the normal limits of a control chart, with 2 being the warning limit and 3 the rejection point, as an example.

When you construct a control chart in Excel, every time there is a new calibration result after the first 2 years, just insert the new value in the table where you have listed the calibration results, and it will be added to the chart. The initial 2 years is just your basis; you can adjust it once you have more results. The more results you have, the higher the confidence in the stability value.

Edwin.

Vinod

Thanks a lot, Sir. You have great experience in calibration.

edsponce

You are welcome. Happy to help.

Edwin

Vishwajeet Patil

Hi Edwin,

Thanks for sharing a detailed reply in our past communication. One more query.

I want to analyze a weighing balance to increase its calibration frequency. Which parameter should I consider when creating the control charts, in the same way that I used the maximum permissible error for the Vernier caliper?

please guide me.

Regards,

Vishwajeet

edsponce

Hi Vishwajeet,

You can apply the same principle that you used with the vernier caliper. You can also look up the accuracy class of the weighing scale and use that as the MPE.

You can also follow the response I gave to Vinod about stability, just before this comment.

Thanks and regards,

Edwin

Vishwajeet Patil

Thank you Edwin, this is great support.

edsponce

You are welcome Vishwajeet.

Rose

What is the difference between a deviation and a nonconformity, as outlined in various sections of the standard?

edsponce

Hi Rose,

This is as per my observation.

Deviations are identified through observations, feedback, and inspections during the implementation of methods and procedures. If you are following or implementing a procedure or method, but something is lacking or a part of it is not followed, the result is a deviation.

Some deviations can be measured or quantified as a numerical value, so that you can determine how large the deviation is.

A nonconformance follows as a result of not closing or addressing the observed deviations, which are based on the requirements of a standard. Nonconformance is usually expressed in a qualitative sense, as a statement or word that is often used during audits or assessments.

I hope this makes sense.

Edwin

Obydulla

This is my 4 years of data. Now I want to validate my calibration frequency. What should my calibration interval be? Thanks

Criteria: Response Efficiency Check
Limit: 85%-115% (100% tolerance: 15, 80% tolerance: 12)

| Interval (months) | Calibration date | Standard value | % Observed value | Lower 100% | Upper 100% | Lower 80% | Upper 80% |
|---|---|---|---|---|---|---|---|
| 6 | 14.03.17 | 100 | 113.47 | 85 | 115 | 88 | 112 |
| 6 | 12.09.17 | 100 | 97.27 | 85 | 115 | 88 | 112 |
| 6 | 15.03.18 | 100 | 109.58 | 85 | 115 | 88 | 112 |
| 6 | 14.09.18 | 100 | 102.87 | 85 | 115 | 88 | 112 |
| 6 | 22.09.19 | 100 | 110.42 | 85 | 115 | 88 | 112 |
| 6 | 24.12.19 | 100 | 105.55 | 85 | 115 | 88 | 112 |
| 6 | 21.03.20 | 100 | 107.36 | 85 | 115 | 88 | 112 |

edsponce

Hi Obydulla,

I rearranged your data. See the photo for my recommendation.

Notice that an additional 1 year (100%) is added each time the instrument passes at the next calibration. The maximum extension is 24 months, and the interval stays there as long as each result is a ‘pass’ and stays within the 80% limits.
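As a rough illustration of the pass check described above, here is a minimal Python sketch that sorts each observed value from Obydulla's table into the 80% band, the 100% band, or out of tolerance. The function name `classify` and the band labels are my own assumptions for illustration; the sketch does not reproduce the interval recommendation in the photo.

```python
# Observed values (%) and limits are taken from the table in the comment above.
OBSERVED = [113.47, 97.27, 109.58, 102.87, 110.42, 105.55, 107.36]
LIMITS_100 = (85.0, 115.0)  # full tolerance band
LIMITS_80 = (88.0, 112.0)   # 80% band used as the extension criterion


def classify(value, limits_80=LIMITS_80, limits_100=LIMITS_100):
    """Return the tightest band that contains the result (hypothetical helper)."""
    if limits_80[0] <= value <= limits_80[1]:
        return "within 80%"
    if limits_100[0] <= value <= limits_100[1]:
        return "within 100%"
    return "out of tolerance"


results = [classify(v) for v in OBSERVED]
for value, band in zip(OBSERVED, results):
    print(f"{value:7.2f}  {band}")
```

Only results that land in the 80% band would count toward keeping or extending the interval under the rule described above.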

I hope this helps,

Edwin

Leo

Hi Edwin,

Good day!

Thank you for sharing your expertise and experience in metrology. This article is very informative and provides me additional knowledge regarding calibration interval.

By the way, you mentioned in your email reply on 15 Jun 2020 that ‘standard deviation’ is equal to ‘stability’. May I ask how to calculate ‘drift’ and ‘reliability’, which are also of great importance when determining the calibration interval?

Thank you in advance!

Leo

edsponce

Hi Leo,

You are welcome. Thanks again for reading my article.

Drift is the change in an instrument's output reading over time, during a specified period. It is simply the difference between the past result and the present result. See the example below:

I am not using any reliability calculations. But you can calculate reliability based on the number of out-of-tolerance results encountered over the calibration history. Example: 1 out-of-tolerance result in every 5 calibration cycles gives 80 percent reliability ([(5-1)/5]*100 = 80%).
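The two calculations can be sketched in a few lines of Python. This is only an illustration: the readings are hypothetical, and the sign convention (present result minus past result) is an assumption, since only "the difference" is specified above.

```python
def drift(previous_result, present_result):
    """Drift between two calibrations (assumed sign: present minus past)."""
    return present_result - previous_result


def reliability_percent(total_cycles, out_of_tolerance_count):
    """Percentage of calibration cycles that were found in tolerance."""
    if total_cycles <= 0:
        raise ValueError("total_cycles must be positive")
    return (total_cycles - out_of_tolerance_count) / total_cycles * 100


# Hypothetical as-found readings from four successive calibrations.
readings = [100.02, 100.05, 100.04, 100.09]
drifts = [drift(past, present) for past, present in zip(readings, readings[1:])]
print([round(d, 3) for d in drifts])

# The example from the reply: 1 out-of-tolerance result in 5 cycles.
print(reliability_percent(5, 1))
```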

I hope this helps,

Edwin

Leo

Hi Edwin,

Thanks for your explanation. It’s very easy to understand. BTW, is there any word that is opposite of ‘drift’? Or we just say that the drift is ‘small’ or ‘large’.

Regards,

Leo

edsponce

Hi Leo,

You are welcome. Happy to know that my reply is easy to understand.

Regarding the drift, the opposite can be ‘stable’ (or no drift :-)). Yes, you can call it a small or large drift based on the results, or just ‘drift’, because large or small, it is still a drift. What matters is whether it is acceptable or not.

I hope this helps.

Edwin

Leo

Hi Edwin,

Thanks a lot!

You are great…keep it up!

Regards,

Leo

edsponce

Hi Leo,

You are welcome. Happy to help.

Best regards,

Edwin

Faheem haider

I like it.

edsponce

Hi Faheem,

It is good to know, thanks for reading.

Best regards,

Edwin

Chetan Raut

Hi Edwin,

The concept is very nicely explained. Is there any software in which we can monitor and maintain all this calibration data and analysis?

Chetan Raut

Hi Edwin,

Thank you for sharing your expertise and experience in Calibration. This article is very informative and provides me additional knowledge regarding calibration interval.

Is there any software that can manage and analyze all this calibration data and provide calibration intervals?

edsponce

Hi Chetan,

You are welcome and thank you for reading my post.

I have not yet tried any software to automate this process. You could check Minitab.

Thanks and regards,

Edwin

Jason Lee

Hi Edwin, glad I came across your article, it’s very informative. I have 3 questions:

1. How do you determine that the maximum calibration interval should be 24 months? I tried to check online and even ILAC-G24 but didn’t manage to find such information.

2. I have a measurement instrument that measures a dimension of a wafer; say the spec of the wafer is 156 mm +/- 1 mm. If I adopt the “floating interval” method, does that mean the 100% tolerance should be 1 mm and the 80% tolerance 0.8 mm?

3. Is there any meaning to the drift value? How does this drift value determine the calibration interval, as long as I fulfill the “implementation rule”?

edsponce

Hi Jason,

Please see my response after your questions.

1. How do you determine that the maximum calibration interval should be 24 months? I tried to check online and even ILAC-G24 but didn’t manage to find such information.

Answer: The 24-month maximum is just my recommendation, based on the limited data that I have. If you have a good history for your instruments, where stability has been observed for almost 5 years, you now have good evidence to extend the calibration interval beyond 24 months. As long as you have supporting evidence for your decision, it is valid to extend further. But as I said, do not overdo it.

In my experience, most instruments with electronic components can be extended up to 3 years. Gauge blocks and standard weights can reach up to 5 years. But again, this depends on the instrument’s performance and how it is being used.

2. I have a measurement instrument that measures a dimension of a wafer; say the spec of the wafer is 156 mm +/- 1 mm. If I adopt the “floating interval” method, does that mean the 100% tolerance should be 1 mm and the 80% tolerance 0.8 mm?

Answer: Yes you are correct.
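To make the numbers concrete, here is a small Python sketch that turns the wafer spec (156 mm +/- 1 mm) into the 100% and 80% limits. The helper name `tolerance_limits` is hypothetical and only used for illustration.

```python
def tolerance_limits(nominal, tolerance, fraction=1.0):
    """Lower and upper limits at a given fraction of the full tolerance."""
    half_width = tolerance * fraction
    return nominal - half_width, nominal + half_width


# Wafer spec from the question: 156 mm +/- 1 mm.
full_band = tolerance_limits(156.0, 1.0)                # 100% tolerance
guard_band = tolerance_limits(156.0, 1.0, fraction=0.8)  # 80% tolerance

print("100% limits:", full_band)
print(" 80% limits:", tuple(round(x, 3) for x in guard_band))
```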

3. Is there any meaning to the drift value? How does this drift value determine the calibration interval, as long as I fulfill the “implementation rule”?

Answer: Yes, the drift value has the same meaning as the error. In the implementation rule that I shared above, I use the error to determine stability performance; as long as this error is within the tolerance limit, we conclude that the instrument is “stable”.

You can use the drift value the same way. You can replace the error value with the drift value.

As long as the ‘drift value’ stays within the tolerance limit that we set, we can say that the instrument is stable. Since it is stable over a set period of time, this shows that the period is safe to use as the calibration interval.

I hope this helps. Thanks for reading my posts.

Edwin

Eric

Hi Edwin,

Another good article! You’re really a big help for budding calibration professionals.

As for my idea for determining calibration intervals:

I was thinking of utilizing the intermediate checks, which we plan to do every three months. We just inject one, single value and read the measurement and calculate the error. After one year, we will have four readings (including the scheduled calibration). It will then be possible to extrapolate when the instrument MIGHT go out of tolerance. We could then choose an interval that comes before that date.

For example: If the data shows that the instrument may go out of tolerance after two years, we can specify an interval of one-and-a-half years.

We will be using linear extrapolation (the easiest), but since things are not always linear, we will use a shorter interval to play it safe. If the next set of data shows that we can extend further, we can do that.

As long as we have data to back up our decision, I think it will pass.
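The extrapolation idea above could be sketched in Python as follows. This is only an illustration under stated assumptions: the quarterly errors and the tolerance are hypothetical, the line is fitted by ordinary least squares, and the 0.75 safety factor simply mirrors the "two years becomes one-and-a-half years" example.

```python
def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def months_until_limit(months, errors, tolerance):
    """Month at which the fitted line reaches +/- tolerance (None if flat)."""
    slope, intercept = fit_line(months, errors)
    if slope == 0:
        return None  # no trend, so the line never reaches the limit
    limit = tolerance if slope > 0 else -tolerance
    return (limit - intercept) / slope


# Hypothetical intermediate-check errors at 3, 6, 9 and 12 months.
months = [3, 6, 9, 12]
errors = [0.125, 0.25, 0.375, 0.5]
tolerance = 1.0

predicted = months_until_limit(months, errors, tolerance)
suggested = predicted * 0.75  # assumed safety factor, not a standard value
print(f"predicted out-of-tolerance after about {predicted:.1f} months")
print(f"suggested interval: about {suggested:.1f} months")
```

With real data the points will not sit exactly on a line, so the predicted month is only an estimate and the safety factor matters more.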

edsponce

Hi Eric,

Thank you.

Great idea! Yes, I believe that as long as you have data to back up your method, it is OK. The more data, the better.

Thanks for sharing.

Edwin

Karla

Hi, I would like to know: what do the intervals between calibrations depend on?

edsponce

Hi Karla,

Below are some of the factors that the interval between calibrations depends on:

1. The frequency of use. The more often the instrument is used, the shorter the calibration interval.

2. The drift and stability, that is, the capability of the instrument’s output to stay within the control limits over a specified period.

3. Whether a regulatory body requires a specific interval.

4. The environmental conditions where the instrument is located. A harsh environment affects the performance of the instrument.

I hope I answered your question.

Edwin

Maribel

Hello, I would like to contact you to ask some questions about an interval method that I have established, and I would like to share the Excel file. I am leaving my email here; hopefully we can get in touch: natybel_14@hotmail.com

Vinod Kumar

Hi Mr. Edwin, I do not fully agree with your statement about UCL and LCL. These are limits that are calculated automatically in a control chart as we enter successive results. When the limits are taken from the equipment specification, they should be called USL and LSL.

Please clarify this doubt.

edsponce

Hi Mr. Vinod,

Yes, you have a point, and that is also correct, since we based the tolerance limits on the specifications. You can do it that way if it makes more sense to you. I labeled them UCL and LCL because I presented the data as a control chart.
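The distinction can be illustrated with a short Python sketch. The error values and the +/- 0.10 specification are hypothetical, and the control limits use the simplified convention of mean +/- 3 sample standard deviations, which is an assumption rather than the method used in the article.

```python
from statistics import mean, stdev


def control_limits(results, k=3):
    """LCL and UCL calculated from the data itself (mean +/- k sample std devs)."""
    center = mean(results)
    spread = stdev(results)
    return center - k * spread, center + k * spread


def spec_limits(nominal, tolerance):
    """LSL and USL taken from the equipment specification."""
    return nominal - tolerance, nominal + tolerance


# Hypothetical calibration errors and a hypothetical +/- 0.10 specification.
errors = [0.02, -0.01, 0.03, 0.00, 0.01]
lcl, ucl = control_limits(errors)
lsl, usl = spec_limits(0.0, 0.10)

print(f"LCL={lcl:.4f}  UCL={ucl:.4f}  (from the results)")
print(f"LSL={lsl:.4f}  USL={usl:.4f}  (from the specification)")
```

The control limits move as new results are added, while the specification limits stay fixed.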

Thanks for sharing your thoughts.

Best regards,

Edwin

Joan

Thank you so much for sharing your expertise and knowledge in metrology, Ed. Even I, who am not from this profession, understood it very well (some abbreviations I didn’t understand, but I have a trustworthy friend who can help me with those… wink). Highly appreciated.

Keep up your legacy =) rolling!

edsponce

Hi Ms. Joan,

You are welcome!

Thank you for reading. I appreciate your comments, it gives me more motivation to share more.

Have a safe day!

Edwin

Alfred V. Lomat

Thank you so much, Sir Edwin. It’s simple and clear to understand. Posting the links to your references means additional information for us! Keep it up, and thanks again for sharing your knowledge.

edsponce

Hi Alfred,

You’re welcome. I appreciate your comments.

Have a safe day!

Edwin

Syaiful

Hi Edwin,

Thank you for the amazing article. However, I have a question. During each of my instrument calibrations there is a fixed setpoint, but the reference instrument temperature is not exactly at the setpoint, while my instrument reads close to the reference instrument. Since my instrument's accuracy specification is very small, the results fall outside the limits. However, if I use the standard deviation method, the values fall within the limits. Which would you recommend using: the manufacturer specifications or the stability method? I hope you can help me with this doubt. For example:

| Interval | Setpoint | Reference Temp | UUT Temp | 100% Accuracy | 80% Accuracy |
|---|---|---|---|---|---|
| 12 | -60 | -60.123 | -60.089 | 0.025 | 0.02 |
| 12 | -60 | -60.059 | -60.022 | 0.025 | 0.02 |
| 12 | -60 | -60.160 | -60.129 | 0.025 | 0.02 |
| 12 | -60 | -60.076 | -60.046 | 0.025 | 0.02 |
| 12 | -60 | -60.025 | -59.998 | 0.025 | 0.02 |

Regards,

Syaiful

edsponce

Hi Syaiful,

The accuracy specification is correct, but it is the specification of the RTD alone, which is why it is very small. You also need to include the effect of the indicator used, plus how well the sensor is set up in the temperature source (the effect of axial or radial uniformity, for example).

What I recommend is to use the expanded uncertainty reported in the calibration certificate as the tolerance limit, because it already combines the errors of the standard used, the accuracy of the RTD, and the indicator used.

Drift and/or stability is also OK to use as a basis for monitoring the calibration interval.

For the purpose of monitoring the calibration interval, 2 standard deviations is also OK as the control limit; you then plot it in the control chart.

Any of the above can be used for monitoring the calibration interval. Just select the one you are comfortable with. For me, I would choose the expanded measurement uncertainty as the control limits and then use the ‘drift’, since it is based on historical data where actual calibration results are used.
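To show how the two approaches compare on Syaiful's numbers, here is a minimal Python sketch. It assumes the error is the UUT reading minus the reference reading, and it uses the sample standard deviation for the 2 standard deviation limits; both are assumptions for illustration.

```python
from statistics import mean, stdev

# Readings copied from the table in the question above.
REFERENCE = [-60.123, -60.059, -60.160, -60.076, -60.025]
UUT = [-60.089, -60.022, -60.129, -60.046, -59.998]
ACCURACY_100 = 0.025  # manufacturer accuracy used as the 100% limit

# Error at each calibration (assumed definition: UUT minus reference).
errors = [round(u - r, 3) for u, r in zip(UUT, REFERENCE)]

# Approach 1: compare each error with the manufacturer accuracy.
exceeds_spec = [abs(e) > ACCURACY_100 for e in errors]

# Approach 2: control limits at mean +/- 2 sample standard deviations.
center = mean(errors)
spread = stdev(errors)
lower, upper = center - 2 * spread, center + 2 * spread
within_2sd = [lower <= e <= upper for e in errors]

print("errors:", errors)
print("exceeds manufacturer accuracy:", exceeds_spec)
print(f"2 SD limits: {lower:.4f} to {upper:.4f}")
print("within 2 SD limits:", within_2sd)
```

This matches the situation described in the question: every error is larger than the RTD accuracy alone, yet all of them sit inside the 2 standard deviation limits.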

Let me know your thoughts.

Best regards,

Edwin.

Sintija

Hello,

Thank you very much for these well-described guidelines.

I would like to hear your opinion on the next calibration dates.

Currently we are struggling with setting proper calibration due dates. Which would you suggest using: the first date of the calibration or the last date? As an example: the interval is 1 year and the calibration takes 1 month, from the 31st of December until the 31st of January. In my opinion, we should count the calibration due date from the first day of calibration. Then we can guarantee that the equipment is calibrated again within 12 months; if we take the last day, it actually means 13 months.

Please advise. I was trying to find some references, but without success.

Looking forward to hearing from you soon!

Thank you very much in advance!

Have a nice day!

Yours sincerely,

Sintija

edsponce

Hi Sintija,

For the calibration date, what we usually do is use the first day of calibration. Most instruments are calibrated within a day, but in cases where calibration takes more than a day, it is still safer to use the initial calibration date.

During monitoring, we just enter the calibration date written on the calibration certificate into Excel, and Excel calculates the number of days automatically once you have indicated your calibration interval.
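The same due-date tracking can be sketched in Python instead of Excel. The years below are hypothetical, chosen only to match Sintija's example of a calibration running from 31 December to 31 January, and `add_months` is a helper written for this illustration.

```python
import calendar
from datetime import date


def add_months(start, months):
    """Add whole months to a date, clamping to the last day of the month."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def days_remaining(due, today):
    """Days left until the due date (negative means overdue)."""
    return (due - today).days


# Hypothetical dates: calibration starts 31 Dec and finishes 31 Jan.
first_day = date(2023, 12, 31)
last_day = date(2024, 1, 31)
interval_months = 12

due_from_first_day = add_months(first_day, interval_months)
due_from_last_day = add_months(last_day, interval_months)

print("due date counted from the first day:", due_from_first_day)
print("due date counted from the last day: ", due_from_last_day)
print("difference in days:", (due_from_last_day - due_from_first_day).days)
```

Counting from the first day gives the earlier, more conservative due date.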

There are no exact references for calibration intervals. Most are purely guidelines, because the owner is the one most knowledgeable about the performance of its instruments. So as long as your choice of calibration interval is backed up by your own study and analysis, it is OK.

I have included some references that you may read further at the end of my article.

I hope this helps, sorry for the late response.

Edwin

Wagner M

Hello there. Is there a way I can chat with you? I’m a rookie on the subject and want to know how to determine the control limits, especially for compliance with ISO 17025 standards.

edsponce

Hi Wagner,

Thanks for reading my article. Control limits can be determined based on the established “initial calibration interval” that I shared in this article.

You can message me on my Facebook page or email me at edwin@calibrationawareness.com.

Thanks and regards,

Edwin

Kuldeep

Hi,

Reading the comments above and your responses, I feel confident that my question will be answered in the same way, with an example. My query concerns preventive maintenance (PM) of lab instruments. Currently we have a scheduled PM on a quarterly basis and now want to extend it, but we don’t know how, or up to what interval. Could you please help me with an example and reference tools to use?

edsponce

Hi Kuldeep,

I appreciate you taking the time to read my posts.

We had the same preventive maintenance frequency in the last lab I worked at, but we did not adjust it because it was manageable on our part, so my knowledge in this area is limited.

Adjusting the PM interval needs more documentation because, as with the calibration interval, there are many factors to consider, such as:

1. How frequently the lab instruments are used

2. The time it takes to finish the PM

3. How critical the instrument is

4. The availability of the person who will perform the PM

5. Any manufacturer recommendations

But as I see it, the analysis is the same as in my presentation above. You also need to prepare historical performance records to support the adjustment of the PM interval, such as:

1. Calibration or verification results, including any out-of-tolerance conditions encountered

2. Any malfunctions encountered during the period the instrument was used

As with calibration interval adjustment, this is based on the knowledge and experience of the user. I am not aware of any standard reference that dictates the frequency of PM; it is up to you, the owner. The important thing is to ensure that records are maintained to support any decisions that you make.

For general reference about PM, you can refer to clause 6.4.3 of ISO 17025:2017 which states: “The laboratory shall have a procedure for handling, transport, storage, use and planned maintenance of equipment in order to ensure the proper functioning and to prevent contamination or deterioration.”

The only requirement is that we have a planned Preventive Maintenance that we follow as per the procedure we set and implemented.

I hope this helps,

Edwin

softbigs

Great article! I’ve been struggling to find a good way to increase the calibration frequency of my instruments. This article is a great starting point.

edsponce

Hi, you’re welcome. Thanks for visiting my site.

Best regards,

Edwin

threadsGuy

Great article! I’ve been struggling to find a good way to increase the calibration frequency of my instruments. This article is a great starting point.

edsponce

Hi ThreadsGuy, I am glad you liked it. Thanks for reading my post.

little Nightmares

I completely agree with the importance of calibration in ensuring accurate measurements. In our industry, we have noticed that many instruments are not calibrated frequently enough, which can lead to significant errors. By increasing the calibration frequency, we can improve the accuracy of our measurements and reduce the risk of errors. Great post!

SsYouTube

Great insights on calibration intervals! I appreciate the practical tips on increasing calibration frequency. It’s a reminder of how crucial it is to maintain accuracy in our instruments. Looking forward to implementing some of these strategies in our lab!