
What is a Thermo-Hygrometer?

Most of us are familiar with this instrument: if you have been to a hospital or even a mall lately, you have probably seen one mounted on a wall. A thermo-hygrometer is an instrument used to measure and monitor temperature and humidity at the same time; it is also known as a temperature humidity meter. It combines a thermometer and a humidity meter (hygrometer).

Since the two parameters affect each other, it is useful to monitor them simultaneously. Some thermo-hygrometers include a chart recorder to display and log the readings. Temperature is the degree of hotness or coldness of a body or system, while humidity is the amount of moisture or water vapor in the air.


Why use a Thermo-hygrometer?

Think about a typical day outdoors when it is very hot and very humid. You perspire more, and the perspiration on your skin does not evaporate easily, so you feel sticky and uncomfortable. On the other hand, when it is very cold and the humidity is low, our skin and lips become dry, and breathing dries out our throats, which can lead to a cough.

But how do we know whether temperature and humidity are low or high? By using a thermo-hygrometer. By monitoring temperature and humidity, the desired environmental conditions can be controlled and achieved. The thermo-hygrometer monitors the environment and tells us when action is needed so that our equipment and processes are not affected.


The Importance of a Thermo-Hygrometer

There are many reasons why environmental conditions must be within acceptable limits before calibration work begins.

In dimensional calibration, temperature is a major factor in keeping the measuring process accurate. A gauge block, for example, is affected by thermal expansion, which introduces an additional source of error.
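To get a feel for the size of this effect, here is a minimal sketch of the linear thermal expansion formula, delta_L = alpha x L x delta_T. The function name, the 100 mm block length, the 3 deg C temperature offset, and the expansion coefficient are illustrative assumptions, not values from a specific gauge block certificate; check the coefficient stated by the manufacturer of your own blocks.

```python
# Linear thermal expansion: delta_L = alpha * L * delta_T
# All numbers below are assumed for illustration only.

def thermal_expansion_um(length_mm: float, alpha_per_degc: float, delta_t_degc: float) -> float:
    """Return the change in length in micrometres."""
    delta_mm = alpha_per_degc * length_mm * delta_t_degc
    return delta_mm * 1000.0  # convert mm to micrometres


# Assumed coefficient, typical of steel gauge blocks (per deg C)
ALPHA_STEEL = 11.5e-6

# A 100 mm block measured in a room 3 deg C warmer than the reference temperature
growth = thermal_expansion_um(length_mm=100.0, alpha_per_degc=ALPHA_STEEL, delta_t_degc=3.0)
print(f"Length change: {growth:.2f} micrometres")
```

Under these assumptions the block grows by a few micrometres, which is already significant for dimensional work and shows why the room temperature has to be monitored.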

In electrical systems, high humidity can lead to moisture forming on components; metal parts in particular can rust or oxidize, resulting in damage or malfunction.

On the other hand, an environment with low humidity raises the resistance of the air, which makes electrostatic discharge (ESD) problems more likely.

In some food industries, humidity is kept low to prevent mold from growing on products, keep them dry, and preserve their quality. Others keep temperature and humidity higher to grow the bacteria that ferment their products.

There are many other applications that require a humidity- and temperature-controlled environment.


Where to use a Thermo-hygrometer?

Thermo-hygrometers are used especially in areas or rooms where temperature and humidity are critical to a process, such as laboratories, manufacturing areas, pharmaceutical facilities, and food processing plants. Critical here means that these conditions affect your process, so continuous monitoring should be properly implemented. Some products or procedures also require a certain temperature and humidity to be processed properly.

Please visit my other post to read more about this topic: Temperature-and-humidity-why-do-we-need-to-monitor


Why Calibrate a Thermo-hygrometer?

As with other measuring instruments, a thermo-hygrometer should be calibrated to ensure accurate readings. Its sensor can degrade and drift over time, so regular calibration is needed.

Some thermo-hygrometers or hygrometers drift easily and cannot be adjusted. Once your thermo-hygrometer is calibrated, the calibration certificate will show its errors, which you can use to apply corrections during measurements.

It is also advisable to monitor performance by regularly comparing the unit with another calibrated thermo-hygrometer. This way, drift can be detected early, within the calibration interval.

Regular calibration also builds a record that helps us decide whether to increase or decrease the calibration frequency.

A recommended calibration interval for thermo-hygrometers that are situated in a stable environment is 6 to 12 months.


How to calibrate a Thermo-hygrometer?

The standard way to calibrate a thermo-hygrometer or hygrometer is to use a special chamber or a humidity generator, usually in a laboratory. The humidity generator can be set to different humidity and temperature points.

In this post, however, we will focus on a single-point calibration performed at the location where the thermo-hygrometer is used.

Thermo-hygrometers use different types of sensors, but regardless of the sensor type, the calibration procedure is mostly the same. As long as the sensor is accessible and intact, we can calibrate the instrument with this simple method.

This method can also be applied to a hygrometer; in that case, only the humidity is calibrated.

It is purely a comparison between a reference standard (a more accurate thermo-hygrometer) and a regular thermo-hygrometer, which we call the unit under calibration (UUC). No adjustment is made unless the instrument is capable of being adjusted.

You can use this method at any ambient room temperature in an enclosed area where the environment is stable. It is suitable for thermo-hygrometers that are permanently installed and cannot be removed.

This method is also applicable when you do not require very high accuracy or when your process monitoring has a wide tolerance.

See for more details: https://en.wikipedia.org/wiki/Hygrometer

Let us go to our procedure below.

Objective:

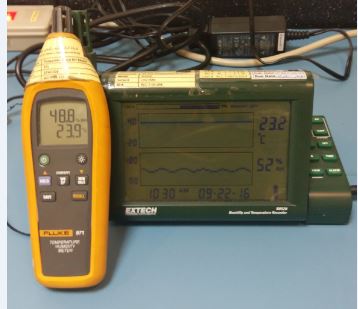

To define the verification procedure for thermo-hygrometers with a probe, using a Fluke 971 as the reference standard. We will use a single-point calibration, since the condition we measure is the existing ambient condition of a stable room.

Calibration Method:

This is accomplished by comparing the temperature and humidity readings displayed on the Fluke 971, which is the reference standard, with those displayed on the Unit Under Calibration (UUC), which is the Extech RH520A Humidity and Temperature Chart Recorder.

Click this link to see my review for Extech RH520A Humidity and Temperature Chart Recorder

Requirements:

- Warm-up time (UUC): At least 1 hour for proper stabilization

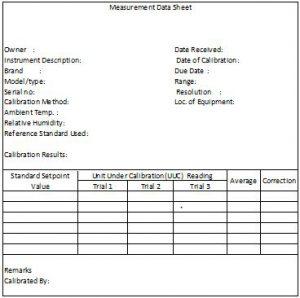

Sample Measurement Data Sheet (MDS) - Temperature: 23 +/- 5 deg C

- Humidity: 50 +/- 30%

- Measurement Data Sheet (MDS)

Reference standard to use:

- Temperature Humidity Meter Fluke 971

Other materials:

- Holder

- Cleaning materials

Calibration procedure:

- Check the thermo-hygrometer for any visual defects that can affect its accuracy. Discontinue calibration if any defect is noted.

- Clean the thermo-hygrometer with a soft cloth and carefully check the sensor for any signs of dust or contamination. Check that the batteries are good and replace any that are low.

- Prepare the measurement data sheet (MDS) and record all necessary details or information (brand, model, serial number, etc.).

- Calibration will be based on the room or ambient condition, so make sure that the room has a stable temperature. A confined space where air movement is minimal is better.

- Place the Fluke 971 and the Unit Under Calibration (UUC) together. The sensors of both instruments must be close together and at the same level; use a holder if necessary. Allow the units to stabilize for at least 30 minutes. Temperature and humidity readings are obtained in the same way.

- Once stabilized, take the readings from both displays.

- Repeat the readings 3 times and record them on the MDS.

- Check whether the readings are within the accuracy defined by the manufacturer. For the example above (Extech RH520A), accuracy = +/-3% for humidity and +/-1 deg C for temperature.

- If the readings are within limits, update the corresponding record, label and seal the unit, and issue it to the owner; otherwise, perform the necessary repair or adjustment.

- End
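The comparison step in the procedure above can be sketched as a short script. This is only an illustration: the readings are made-up numbers, not real MDS data, the `evaluate` helper is a hypothetical name, and judging the result by the average error of the three trials is an assumption (your own procedure may require each individual reading to be within limits).

```python
from statistics import mean

# Manufacturer accuracy for the Extech RH520A as quoted in the procedure
TOL_RH = 3.0    # +/- % RH
TOL_TEMP = 1.0  # +/- deg C


def evaluate(ref_readings, uuc_readings, tolerance):
    """Return (average error, pass/fail), where error = UUC reading - reference reading."""
    errors = [uuc - ref for ref, uuc in zip(ref_readings, uuc_readings)]
    avg_error = mean(errors)
    return avg_error, abs(avg_error) <= tolerance


# Hypothetical readings from three trials (reference = Fluke 971, UUC = chart recorder)
ref_temp, uuc_temp = [23.1, 23.2, 23.1], [23.6, 23.8, 23.7]
ref_rh, uuc_rh = [52.0, 52.3, 52.1], [55.9, 56.0, 56.2]

temp_error, temp_ok = evaluate(ref_temp, uuc_temp, TOL_TEMP)
rh_error, rh_ok = evaluate(ref_rh, uuc_rh, TOL_RH)

print(f"Temperature error: {temp_error:+.2f} deg C, within limits: {temp_ok}")
print(f"Humidity error: {rh_error:+.2f} % RH, within limits: {rh_ok}")
```

With these made-up numbers the temperature passes while the humidity is outside the +/-3% limit, so the unit would go to repair or adjustment, or be used with a documented correction.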

To view more Thermo-hygrometers, kindly click this LINK.

Thank you for visiting this page. I would appreciate it if you leave a comment below and join my email list.

Edwin

25 Responses

Kevin

Hi Edwin,

Your calibration procedure is very helpful. I just want to know several things about the calibration process. Is there any international standard that states the number of set points required for the calibration process and the number of repetitions for each set point?

Thank you.

edsponce

Hi Kevin,

Thank you for the positive comment. I have read a British Standard which details a calibration procedure that requires three set points of interest with three repetitions. You may check this standard, BS 1339-3:2004. It has an updated version, but I have not checked it yet. I would appreciate it if you could update me in case you read the updated one.

Hope this can help.

Best regards,

Edwin

Gregor Mendell Rabang

Hi, what is the calibration frequency for these hygrometers? Is calibrating every 2 years valid?

edsponce

Hi Gregor,

Thank you for reading my post. Usually, there is no fixed rule for how often an instrument must be calibrated. But the majority of users default to an interval of 1 year. This is based on experience and on the stability of the environment where the hygrometer is placed and used.

A 2-year interval is valid provided that you have a history or record of its past performance showing stable calibration results that you can rely on.

If you have a new hygrometer, and your budget allows it, my recommendation is to start with a 6-month interval, then lengthen the interval each time the readings remain stable, until you reach 2 years, which can then stay as your calibration interval. At that point you can be confident that 2 years is appropriate, and you have a justification if an auditor ever questions it.
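As a rough sketch, this approach could be written as a small rule. The function name is hypothetical, and two details are my own assumptions for illustration: the interval is doubled after each stable calibration (6, 12, then 24 months), and it falls back to 6 months when a calibration shows instability.

```python
def next_interval_months(current: int, stable: bool, start: int = 6, maximum: int = 24) -> int:
    """Suggest the next calibration interval in months."""
    if not stable:
        # Assumption: go back to the starting interval after an unstable result
        return start
    # Assumption: double the interval after a stable result, capped at the maximum
    return min(current * 2, maximum)


# Walk through a history of stable calibrations, starting from 6 months
interval = 6
for result_stable in [True, True, True]:
    interval = next_interval_months(interval, result_stable)
    print(f"Next interval: {interval} months")
```

Keeping the decision as an explicit rule like this, together with the calibration records behind it, is the kind of justification an auditor will usually ask for.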

I hope this helps, please do not hesitate to comment further.

Thanks and regards,

Edwin

rhea

hello good day! what is/are your reference/s for the procedure?

edsponce

Hi Ms. Rhea,

You may check this procedure in the British Standard BS 1339-3:2004, section 9.4. It is not the latest version, but you can read it there.

Hope this helps,

Edwin

hk combs

Hi

I am in a situation unusual to my normal field, which is mechanical HVAC, building controls, etc. We are managing a building just over a year old. It is unique as it is a gallery/teaching center for the arts. The gallery rotates very high-end displays along with up-and-coming artists of all ages and from around the world.

I have been asked to get baseline humidity % and temperatures to confirm or dispute the accuracy of the devices already in place. I was pleased at first to see the Fluke 971 getting a 55% average, but after cross-referencing with two other devices, one a humidistat for a humidification system and the second a near high-end, trusted stand-alone unit, I am not so sure. The other two devices are closer to each other than to the readings from the Fluke 971. The two-to-one ratio is very questionable, along with an average deviation of 12%. The humidity dead band is consistent on all the devices, but the start points are not. The short question is: how can I solidly confirm which one is out of calibration?

edsponce

Hi HK,

Thank you for visiting my site.

You have performed a good verification of the humidity readings. But for the verification to be valid, you need a good reference, and that reference should be a calibrated instrument. That way, comparing any result with the reference standard will tell you whether it is acceptable or not.

If you are using the Fluke 971 as the reference, be sure that it is still within its calibration interval and that you have the calibration certificate at hand. Since it is calibrated, you can be confident that it is reading accurately.

Next, after you compare readings, check the calibration certificate; there is a chance that the Fluke 971 has a correction factor that you can use. Apply the correction factor if applicable (check my related post about correction factors). Also, be sure that when you perform the verification, the Fluke 971 sensor is close to the sensors in question.

The 2:1 accuracy ratio is not a big deal as long as the verification result is acceptable to you or within the indicated tolerance.

Hope this helps

Edwin

Wayne

Very helpful document for validation of these devices. I’ve been searching high and low for a procedure to validate our units used in a medical lab (just environmental monitoring, not for analyzers), and this was the best I’ve managed to find. We use the CLSI guidelines extensively and they did not have anything for hygrometers. We don’t calibrate these devices other than the original 17025 documentation provided, but rather perform an annual *validation* of the UUT. Thanks for posting this!!

edsponce

Hi Wayne,

You are welcome.

I am glad that this post has helped you in some way.

I appreciate your comments.

Thanks and regards,

Edwin

Praveen

Hi ,

I have a thermo-hygrometer which I have calibrated at 10%RH, 50%RH and 95%RH @ 25 DegC; additionally, I have calibrated it at the 10 DegC and 50 DegC temperature points (without humidity).

My question is: does the instrument need humidity calibration at different temperatures? I am using this hygrometer in the climatic test of my product, where the test is conducted at 10 DegC and 10%RH, so do I need to calibrate the instrument at 10 DegC and 10%RH?

edsponce

Hi Praveen,

As much as possible, the calibrated range should be the same as the user range or conditions of use. If your calibrator can generate the same temperature and humidity as your conditions of use, so much the better. If not, you can calibrate at 2 points, a lower one and a higher one, that bracket your user range, let's say 5% and 15%, together with the temperature.

As I see it, your calibration setpoints are already OK. You have covered your user range even though temperature is calibrated separately at 10 deg C. It is like using a separate thermometer and hygrometer, each with its own calibration.

I hope this helps,

Edwin

loadcells

All the things about the calibration of thermo hygrometer single point method are discussed over here. This article mentions and acts as such a moving trigger. It is an article worth applauding for based on its content. I am sure many people will come to read this in future.

Gizel

Hi! Can you cite a reference for this procedure? I am looking for a published procedure or method much exactly as you have shared. A method wherein a hygrometer (UUC) is calibrated by comparing value with a Reference Standard. Thank you very much!

edsponce

Hi Gizel,

You may check this reference guide in the British Standard BS 1339-3:2004, clause 9.4.

I hope this helps,

Edwin

sam

Hi, how can I know what the standard reading of measuring equipment like a hygrometer, thermometer and weighing scale is? In our calibration procedure, units with >15% humidity readings or >5% temperature, mas and lux readings of the reference standard are considered out of acceptable limits and should be replaced. But the auditor questioned us because one of our calibrated instruments, a dial-type thermometer, is more than 5% off from our standard readings. Should I change the standard reading? What should it be, and how can I compute the standard reading?

edsponce

Hello Sam,

The standard reading is the value displayed on the reference standard. This is the reference value against which we compare the readings of the Unit Under Calibration.

For example, you are using a reference thermometer to calibrate a dial type thermometer. The displayed value of the reference thermometer is 5, this is the standard value. Then you compare the reading of the dial thermometer, for example 4.

Based on the result, you have an error of 4-5 = -1. If -1 is acceptable or within the tolerance limit, then there is no need to adjust. If not, you need to adjust it so that it reads the same as 5. In your case, if 5% is too much, you need to adjust your dial thermometer, or, if it cannot be adjusted, put a note in the report to use a correction factor for that specific range.

The standard reading should not be changed, because this is our reference value. What you can do for the reference value is check whether its calibration certificate lists a correction that you need to apply first before using it.

For example, if the reference standard's displayed value is 5, check its calibration certificate for a correction. If it has one, for example -1, then deduct 1 from 5: 5-1 = 4. Now 4 is the final standard value.
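The arithmetic in this example can be sketched in a few lines of Python. The function names are hypothetical and the numbers are the same illustrative values used above; the sign convention assumed here is that a certificate correction is added to the displayed value.

```python
def error(uuc_reading, standard_value):
    """Error of the unit under calibration: UUC reading minus standard value."""
    return uuc_reading - standard_value


def corrected_standard(displayed, correction):
    """Apply the certificate correction to the reference standard's displayed value."""
    return displayed + correction


# Reference displays 5, dial thermometer (UUC) displays 4
print(error(4, 5))

# The certificate of the reference lists a correction of -1
standard = corrected_standard(5, -1)
print(standard)

# Error of the UUC against the corrected standard value
print(error(4, standard))
```

Note how applying the correction of the reference first changes the conclusion: against the uncorrected value the dial thermometer appears to be off by -1, but against the corrected standard value it agrees.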

You can read more here on how to use the calibration certificate and the correction factor>> https://calibrationawareness.com/how-to-properly-use-and-interpret-an-iso-17025-calibration-certificate

I hope this helps,

Edwin

Zaida

Good day! May I ask up to how much of a correction factor can still be considered acceptable? When does it become absolutely essential to replace the unit? For example, one of our thermo-hygrometers indicates a correction factor of +10% RH. Until when can we continue using this unit, and how far off can it be from the standard? Is there a maximum limit to using a correction factor? Thank you!

edsponce

Hi Zaida,

Thanks for reading my post.

There is no limit on using a correction factor, because this depends on what the user finds acceptable and how well the user understands the instrument. As long as the data from the instrument are applied accurately to your process, an instrument with a correction factor is still usable.

The only problem comes when a different user, who does not know how to use the correction factor or is not aware that one is needed, uses the instrument for calibration or measurement.

In my experience, it is essential to replace the unit if:

1. Using a correction factor is not practical, for example when the instrument has multiple ranges that could cause confusion and it is used regularly by different people.

2. Your procedure states that an instrument which is out of tolerance and cannot be adjusted must be replaced.

3. You observe that the correction factor gets larger with every recalibration. This indicates a stability issue.

4. For more guidance, consult the specifications. For some instruments, such as pressure gauges, continued use is not advisable once the full-scale reading has shifted by more than 10% or the zero has shifted by more than 25%.
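For point 3, a simple way to spot a growing correction factor is to compare the corrections from successive calibration certificates. The sketch below is only an illustration: the function name is hypothetical, the history values are made up, and treating a strictly increasing magnitude as the warning sign is my own simplifying assumption.

```python
def correction_is_growing(corrections):
    """Return True when each correction is larger in magnitude than the previous one."""
    magnitudes = [abs(c) for c in corrections]
    if len(magnitudes) < 2:
        return False  # not enough history to judge a trend
    return all(later > earlier for earlier, later in zip(magnitudes, magnitudes[1:]))


# Hypothetical humidity corrections (% RH) from four successive certificates
history = [2.0, 4.5, 7.0, 10.0]
print(correction_is_growing(history))
```

A result of True here would support replacing the unit or shortening its calibration interval, while a correction that stays roughly constant suggests the unit is stable and the correction can keep being applied.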

I hope this helps,

Edwin

John

Hello Edwin,

Thank you for the good guidance, especially Nº 3 regarding stability!

edsponce

Hi John,

You are welcome.

Thank you as well for visiting my site.

Edwin

Husnul

Hi! Just want to reconfirm. Does this mean that we can set the correction factor for humidity ourselves? Or where can we look up the correction factor to verify that the device is acceptable for use?

edsponce

Hi Husnul,

The correction factor can be seen or calculated if you have the calibration certificate. You cannot set a correction factor without the calibration results. The correction factor is added or subtracted from the measured value, therefore, making the results more accurate. To learn more about correction factors, I have a separate article in this link >> Correction Factor

I hope this helps,

Edwin

Mohamed

thank u sir this is amazing!

edsponce

You’re welcome, thanks for reading my post.

best regards,

Edwin